Every few months, a new web scraping tool launches promising to make data extraction effortless. Point and click. No code required. Enterprise scale. Done in minutes.

Some of these tools are genuinely excellent — for the right use cases. The problem is that the marketing rarely tells you where they stop working. And for businesses with serious data requirements, that gap between what a tool promises and what it actually delivers in production can cost months of wasted time and budget.

This post is an honest review of the 10 most widely used web scraping tools in 2026. What each one is actually good at. Where each one breaks down. Real pricing. And a clear framework for understanding when none of them are the right answer — and what custom development delivers that no off-the-shelf tool can.

1. Scrapy

What it is: The most widely used open-source web scraping framework. Python-based, built for scale, maintained by a large community.

Best for: Developers who want full control over their scraping logic, need to build complex crawlers, and are comfortable writing and maintaining Python code.

Genuine strengths:

- Extremely powerful and flexible — you can build almost anything with it

- Built-in support for crawling, item pipelines, middleware and output formatting

- Scales well — designed for high-volume crawling

- Free and open-source, no per-request costs

- Huge community, extensive documentation, years of battle-testing

Real limitations:

- Does not handle JavaScript-rendered content — requires integration with a headless browser tool, which adds significant complexity

- Requires meaningful Python knowledge to use effectively

- No built-in proxy management, anti-bot handling or monitoring — you build these yourself

- Maintenance burden falls entirely on your team — when a site changes, you fix the scraper

Pricing: Free (open-source). Infrastructure and maintenance costs are your own.

Verdict: An excellent foundation for a developer-built solution, but it is a building block — not a complete solution. Treat it as a component of a custom build, not a replacement for one.
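
To make the "building block" point concrete, here is a minimal sketch of one of the item pipelines mentioned in the strengths list. It assumes items arrive as dicts with a hypothetical `price` field holding strings such as "£1,299.00". The class name and field are illustrative. The `process_item(self, item, spider)` signature follows the convention in Scrapy's item pipeline documentation, so check it against the version you run.

```python
import re


class PriceCleaningPipeline:
    """Hypothetical pipeline: turn a scraped price string into a float."""

    def process_item(self, item, spider):
        raw = str(item.get("price", ""))
        # Keep digits and the decimal point; drop currency symbols and commas.
        digits = re.sub(r"[^0-9.]", "", raw)
        try:
            item["price"] = float(digits)
        except ValueError:
            # Nothing numeric was found (or the value was malformed).
            item["price"] = None
        return item
```

In a real project a class like this is enabled through the `ITEM_PIPELINES` setting. Proxy rotation, block handling and monitoring would still need to be written separately.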

2. Playwright / Puppeteer

What it is: Browser automation libraries that control a real browser programmatically. Playwright and its predecessor Puppeteer are the standard tools for scraping JavaScript-rendered pages.

Best for: Scraping pages where content only exists after JavaScript executes — single-page applications, infinite scroll, dynamic content.

Genuine strengths:

- Can handle any JavaScript-rendered page — if a browser can load it, these can scrape it

- Supports multiple browsers (Playwright drives Chromium, Firefox and WebKit, the engine behind Safari)

- Can interact with pages — click buttons, fill forms, scroll, wait for elements

- Free and open-source

- Playwright in particular has excellent documentation and active development

Real limitations:

- Resource-heavy — each browser instance consumes significant memory and CPU

- Slow compared to HTTP-only scraping — full browser rendering takes time

- Default configurations are easily detected by bot protection systems

- Requires stealth patching and careful configuration to work against protected sites

- Not a complete solution on its own — needs proxy management, scheduling, storage and monitoring built around it

Pricing: Free (open-source). Infrastructure costs can be significant at scale due to resource requirements.

Verdict: The right tool for JavaScript-heavy pages, but only as part of a properly architected system. Running raw Playwright against protected sites without additional tooling will get you blocked quickly.

3. Octoparse

What it is: A no-code desktop and cloud scraping platform with a visual point-and-click interface. One of the most popular tools for non-technical users.

Best for: Non-developers who need to extract data from websites without writing code — small-scale projects, one-time data pulls, teams without engineering resource.

Genuine strengths:

- Genuinely no-code — point, click, extract

- Handles JavaScript rendering and infinite scroll

- Scheduled cloud scraping available

- Includes basic CAPTCHA solving and IP rotation

- Reasonably priced for small-scale use

Real limitations:

- Breaks frequently when site structures change — visual selectors are brittle

- Does not scale well to high volumes — not built for millions of records

- Cannot handle complex scraping logic, conditional extraction, or custom business rules

- Cannot integrate directly with most internal systems — requires manual data export

- No custom monitoring or alerting — you find out about failures when you check manually

Pricing: Free plan (limited); paid plans from around $75–$250/month.

Verdict: Good for quick, small-scale data needs. Falls apart for anything requiring reliability, scale or integration with existing systems.

4. Browse AI

What it is: An AI-powered no-code scraping tool that lets you train a bot by demonstrating what to extract in your browser. Popular for monitoring and recurring extraction tasks.

Best for: Non-technical users who need to monitor specific pages for changes — competitor prices, job listings, product availability.

Genuine strengths:

- Very low barrier to entry — train a bot in minutes

- AI adapts to minor site changes better than purely selector-based tools

- Good for monitoring tasks — alerts when data changes

- Pre-built templates for common scraping tasks

- Reasonable pricing for light use

Real limitations:

- Struggles with heavily protected sites — not built for enterprise anti-bot environments

- Credit-based pricing becomes expensive quickly at volume

- Limited customisation — you work within the tool's framework, not your own

- Data delivery options are limited — primarily exports and webhooks

- Not suitable for complex multi-step scraping flows

Pricing: Free plan available; paid plans from around $49–$249/month based on credits.

Verdict: An excellent entry point for simple monitoring tasks. Not a production-grade data pipeline solution.

5. Apify

What it is: A developer-focused cloud platform for building, running and sharing web scrapers — called "Actors." Large marketplace of pre-built scrapers for common targets.

Best for: Developers who want cloud infrastructure for their scrapers without managing servers, and teams who can use pre-built Actors for common data sources.

Genuine strengths:

- Excellent developer experience — well-documented, flexible SDK

- Large marketplace of pre-built scrapers for popular platforms

- Handles infrastructure, scheduling, proxy management

- Good monitoring and alerting built in

- Scales well for developer-built solutions

Real limitations:

- Pre-built Actors break when target sites update — and updates depend on the Actor author

- Pricing scales steeply at volume — compute units add up quickly for heavy workloads

- Requires solid JavaScript or Python knowledge to build custom Actors effectively

- Vendor lock-in — your scrapers live on their platform

- For proprietary or niche data sources, no pre-built Actor exists and you're building from scratch anyway

Pricing: Free tier available; paid plans from $49/month, scaling with usage. Production workloads typically run $200–$500+/month.

Verdict: A solid platform for developer-built scrapers. The marketplace is useful for common targets, but for anything bespoke you're doing custom development on their platform — which raises cost and adds dependency.

6. Zyte (formerly Scrapinghub)

What it is: An enterprise-focused managed web scraping platform. One of the oldest and most established names in the industry, built on top of Scrapy.

Best for: Large enterprises that need managed, compliant, high-volume data extraction with SLA-backed reliability.

Genuine strengths:

- Enterprise-grade reliability and compliance — serious infrastructure

- Managed service — they handle the scraping, you consume the data

- AI-powered extraction that adapts to site changes

- Strong compliance and data governance features

- Long track record and deep expertise

Real limitations:

- Expensive — enterprise pricing puts it out of reach for most SMBs and startups

- Coverage is strongest for common domains — proprietary or niche sources may not be supported

- Less flexibility for highly custom data requirements

- Onboarding and setup timelines can be long

Pricing: Enterprise pricing, typically starting from $1,000–$5,000+/month for managed services.

Verdict: The right answer for very large enterprises with standard data requirements and the budget to match. Overkill and overpriced for most businesses.

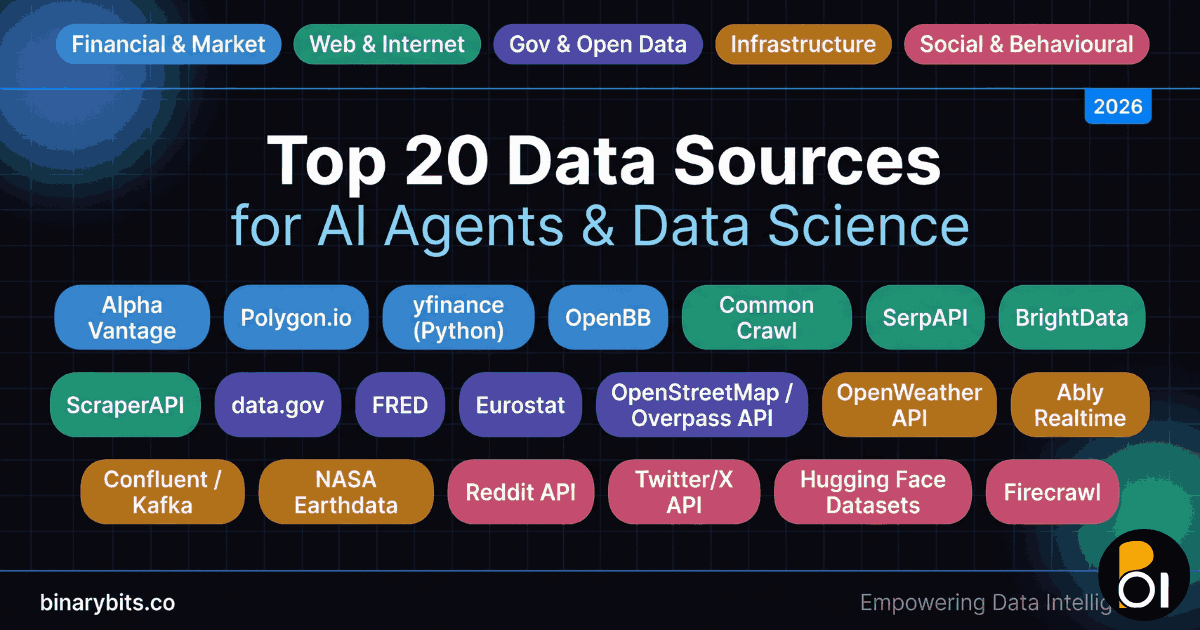

7. Firecrawl

What it is: An AI-powered scraping tool focused on converting websites into clean, structured content — particularly Markdown and JSON output for AI and LLM workflows.

Best for: AI developers who need to feed website content into language models, RAG systems or AI pipelines.

Genuine strengths:

- Excellent at converting messy web content into clean, structured output

- Fast and reliable for content-focused extraction

- Native integration with AI development workflows

- Simple API that's easy to integrate

Real limitations:

- Very expensive per credit — commercially impractical for high-volume production use

- Not designed for structured data extraction from complex or protected sites

- Content-focused rather than data-focused — best for text, not tabular or structured data

- Limited customisation for specific data schemas

Pricing: Free plan (500 credits); paid from $16–$333/month. Per-credit costs make scale expensive.

Verdict: Genuinely useful for AI content pipelines. Not suitable as a general-purpose data extraction solution at any meaningful volume.

8. WebHarvy

What it is: A Windows desktop web scraping application with a visual point-and-click interface and one-time purchase pricing.

Best for: Individual users or small businesses who need occasional scraping, want to own their tool outright, and don't want monthly subscription costs.

Genuine strengths:

- One-time purchase — no ongoing subscription

- Data stays local — no third-party cloud access

- Intelligent pattern detection for lists and tables

- No coding required

- Good value for occasional, small-scale use

Real limitations:

- Desktop-only — no cloud scheduling or remote execution

- Cannot run automated, unattended scraping at scale

- Limited anti-bot capabilities

- Not suitable for integration with data pipelines or downstream systems

- Breaks on heavily JavaScript-dependent sites

Pricing: Around $129 one-time licence.

Verdict: Fine for ad-hoc manual data collection. Not a production tool for any business that needs reliable, automated, ongoing data delivery.

9. Import.io

What it is: An enterprise data platform that combines web scraping with data management — extraction, transformation, monitoring and delivery in one platform.

Best for: Large enterprises that need a managed end-to-end data solution with governance and compliance built in.

Genuine strengths:

- End-to-end platform — extraction through to delivery and monitoring

- Strong data governance and compliance features

- Handles large-scale, recurring extraction reliably

- Managed service reduces internal maintenance burden

Real limitations:

- Enterprise pricing — significant cost for smaller businesses

- Less flexible for highly custom requirements

- Coverage depends on their supported source library

- Long implementation timelines for complex setups

Pricing: Enterprise-only pricing, typically $2,000+/month.

Verdict: A serious platform for enterprises with serious budgets. Not appropriate for the majority of businesses with web scraping needs.

10. Data Miner (Browser Extension)

What it is: A browser extension that lets you scrape data directly from web pages into a spreadsheet, without leaving your browser.

Best for: Researchers, marketers and analysts who need to pull data from specific pages occasionally and export to a spreadsheet.

Genuine strengths:

- Zero setup — installs as a browser extension in seconds

- Free for basic use

- Community recipes for common websites

- Easy CSV export

- Good for one-off research tasks

Real limitations:

- Manual operation — you have to be at the browser running it

- No automation, no scheduling, no pipeline

- Not suitable for any ongoing or automated data collection

- Limited to what you can access in a normal browser session

- Not scalable beyond a handful of pages at a time

Pricing: Free for basic use; paid plans from $19–$49/month for cloud features.

Verdict: Useful for a researcher pulling data manually once. Not relevant for any business data operation.

The Honest Comparison: Tools vs Custom Development

Having reviewed the 10 most popular tools, a clear pattern emerges. Each tool makes specific trade-offs — between flexibility and ease of use, between cost and control, between speed of setup and long-term reliability. Understanding these trade-offs is the key to making the right choice for your situation.

Here is how the two approaches compare across every dimension that matters for a production data operation:

| What Matters | Off-the-Shelf Tools | Custom Development |
|---|---|---|
| Speed to start | ✅ Minutes to hours | ⏱️ 1–4 weeks |
| Handles any site | ❌ Limited to supported sources | ✅ Any publicly accessible site |
| Handles protected sites | ⚠️ Basic protection only | ✅ Full anti-detection tooling |
| Custom data schema | ❌ Output is fixed or limited | ✅ Exactly the fields you need |
| System integration | ⚠️ Export only, or basic webhooks | ✅ Direct database, API, ERP, CRM |
| Scale (records/day) | ⚠️ Thousands to low millions | ✅ Unlimited, designed to spec |
| Ongoing cost at scale | ❌ Grows steeply with volume | ✅ Fixed maintenance cost |
| Vendor dependency | ❌ Pricing changes, service outages | ✅ You own the infrastructure |
| Monitoring & alerting | ⚠️ Basic or none | ✅ Custom alerts, health checks |
| Maintenance when site changes | ❌ Your problem to detect and fix | ✅ Caught by monitoring, fixed by team |
| Ideal for | One-off tasks, small volume, standard sources | Production pipelines, large volume, custom sources |

When Off-the-Shelf Tools Stop Working

The tools reviewed above are the right answer for a specific set of circumstances. They are genuinely the wrong answer for most business-grade data requirements. Here are the specific situations where no off-the-shelf tool will serve you well.

1. Your target sites are heavily protected.

Enterprise-grade bot protection uses TLS fingerprinting, behavioural analysis and machine learning models that identify scrapers based on how they behave — not just what IP address they use. Generic scraping tools use standard request patterns that protection systems recognise and block. A properly built custom scraper addresses each detection layer specifically, with stealth browser configuration, human-like request timing, and monitoring that catches blocks early.
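
Two of the measures above, human-like request timing and early block detection, can be sketched in a few lines. This is a simplified illustration, not a complete anti-detection layer: the status codes, marker strings and function names are assumptions to adjust per target, and TLS fingerprinting and browser stealth are not covered.

```python
import random

BLOCK_STATUS_CODES = {403, 429}                 # assumption: typical "blocked" responses
BLOCK_MARKERS = ("captcha", "access denied")    # assumption: tune per target site


def next_delay(base_seconds: float, jitter: float, rng: random.Random) -> float:
    """Return a randomised wait so requests are not evenly spaced."""
    return base_seconds * rng.uniform(1 - jitter, 1 + jitter)


def looks_blocked(status_code: int, body: str) -> bool:
    """Flag responses that suggest the scraper was challenged or blocked."""
    if status_code in BLOCK_STATUS_CODES:
        return True
    lowered = body.lower()
    return any(marker in lowered for marker in BLOCK_MARKERS)
```

A scraper would sleep for `next_delay(...)` between requests and raise an alert as soon as `looks_blocked(...)` starts returning `True`, rather than continuing to collect challenge pages.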

2. You need data from sources the tools don't support.

The pre-built scrapers and templates in tools like Apify and Browse AI work well for common platforms. If your target is an industry-specific directory, a proprietary portal, a niche marketplace, or a competitor's bespoke website, no pre-built template exists. You are building custom logic whether you use a platform or not — and building it on a platform's infrastructure adds cost and dependency without adding capability.

3. The data needs to flow into your internal systems.

Most tools deliver data via CSV export, basic webhooks, or integrations with a handful of popular platforms. If you need data delivered directly into a proprietary database, an internal ERP, a custom CRM, or a pricing engine — you need a custom pipeline. No tool handles this out of the box.

4. You need custom data cleaning, enrichment or transformation.

Raw scraped data is almost never production-ready. Different sources use different field names, different date formats, different units, different conventions. A production pipeline includes a cleaning and normalisation layer that maps all sources to a consistent internal schema. Tools provide limited or no customisation of this layer.
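
As a rough illustration of that normalisation layer, the sketch below maps two differently shaped source records onto one schema. The field aliases, date formats and schema are hypothetical; a real pipeline would hold one mapping per source.

```python
from datetime import datetime

# Hypothetical per-source field names mapped onto one internal schema.
FIELD_ALIASES = {"title": "name", "product_name": "name",
                 "cost": "price", "price_gbp": "price",
                 "date": "listed_on", "listed": "listed_on"}
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d %b %Y")  # assumed input formats


def parse_date(value: str) -> str:
    """Try each known format and return an ISO 8601 date string."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognised date format: {value!r}")


def normalise(record: dict) -> dict:
    """Rename source fields, then coerce types to the internal schema."""
    out = {FIELD_ALIASES.get(key, key): value for key, value in record.items()}
    if "price" in out:
        out["price"] = float(str(out["price"]).replace(",", ""))
    if "listed_on" in out:
        out["listed_on"] = parse_date(str(out["listed_on"]))
    return out
```

With these assumptions, `{"title": "Widget", "cost": "1,250.50", "date": "03/02/2026"}` and `{"product_name": "Widget", "price_gbp": 1250.5, "listed": "2026-02-03"}` normalise to the same record.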

5. Volume makes tool pricing unworkable.

The per-request or credit-based pricing of most SaaS scraping tools looks affordable at small scale. At production volume — hundreds of thousands or millions of records per month — the monthly cost of a SaaS tool frequently exceeds the cost of a custom-built pipeline with fixed maintenance. One of our clients was paying over £4,000/month for a SaaS scraping service handling 2 million records. Their custom pipeline costs under £400/month to maintain.
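
The arithmetic behind that example is worth spelling out. The sketch below uses the rounded figures quoted above ("over £4,000" and "under £400", so both are bounds rather than exact costs) and ignores the one-off cost of building the pipeline, which is not stated here.

```python
RECORDS_PER_MONTH = 2_000_000
SAAS_MONTHLY_GBP = 4_000     # "over £4,000", so a lower bound
CUSTOM_MONTHLY_GBP = 400     # "under £400", so an upper bound


def cost_per_thousand(monthly_cost: float, records: int) -> float:
    """Monthly cost divided by volume, expressed per 1,000 records."""
    return monthly_cost / records * 1_000


saas = cost_per_thousand(SAAS_MONTHLY_GBP, RECORDS_PER_MONTH)
custom = cost_per_thousand(CUSTOM_MONTHLY_GBP, RECORDS_PER_MONTH)
annual_difference = (SAAS_MONTHLY_GBP - CUSTOM_MONTHLY_GBP) * 12

print(f"SaaS:   £{saas:.2f} per 1,000 records")
print(f"Custom: £{custom:.2f} per 1,000 records")
print(f"Annual difference in running cost: £{annual_difference:,}")
```

On these figures the running cost falls from about £2.00 to about £0.20 per 1,000 records, a difference of £43,200 a year before build costs are taken into account.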

6. You need reliability that a business can depend on.

A scraper that works 80% of the time is not production infrastructure — it is a source of inconsistent data that erodes trust in every downstream decision made using it. Production pipelines need monitoring, alerting, automatic retry logic, health checks and human escalation paths. None of the consumer or mid-market tools provide this level of operational reliability out of the box.
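
Automatic retry logic is the simplest of those pieces to show. The helper below is a minimal sketch of retrying with exponential backoff. The function name, default attempt count and delays are assumptions, and a production version would usually retry only on specific error types.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(task: Callable[[], T], attempts: int = 4,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Run task, retrying with exponential backoff; re-raise the last error."""
    for attempt in range(attempts):
        try:
            return task()
        except Exception:
            if attempt == attempts - 1:
                raise  # retries exhausted: surface the failure for escalation
            # Wait 1x, 2x, 4x ... the base delay before the next attempt.
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")
```

The `sleep` parameter exists so the waiting can be replaced in tests. When the final attempt fails, the exception propagates, which is where alerting and human escalation would take over.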

How to Choose the Right Approach

The decision between a tool and custom development comes down to three questions.

Is your data source supported by a pre-built tool? If yes, and the volume is low, start with a tool. If no, you are building custom logic regardless — the question is whether you do it on a platform or own your infrastructure.

What volume do you need? Under 50,000 records per month, most tools are cost-effective. Above that threshold, the cost equation typically shifts in favour of a custom pipeline. Above 500,000 records per month, custom development is almost always cheaper to operate.

Does the data need to connect to internal systems? If yes, a custom pipeline is almost certainly the right answer. The integration complexity alone typically makes tools impractical.
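
The three questions can be written down as a rough first-pass rule. This is only a sketch: the thresholds come from the paragraphs above, while the function name, the order of the checks and the return labels are assumptions, and a real decision would weigh more than three inputs.

```python
LOW_VOLUME = 50_000     # records/month below which most tools are cost-effective
HIGH_VOLUME = 500_000   # records/month above which custom is almost always cheaper


def recommend(source_supported: bool, records_per_month: int,
              needs_internal_integration: bool) -> str:
    """Encode the three questions as a rough first-pass recommendation."""
    if needs_internal_integration or not source_supported:
        # Custom logic is needed either way, on a platform or on your own stack.
        return "custom"
    if records_per_month < LOW_VOLUME:
        return "tool"
    if records_per_month > HIGH_VOLUME:
        return "custom"
    return "likely custom"  # the middle band, where costs start to shift
```

For example, a supported source at 10,000 records per month with no integration requirement comes out as `"tool"`, while the same source at 2 million records per month comes out as `"custom"`.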

What a Custom Web Scraping Pipeline Actually Looks Like

A professionally built custom scraping system is not just a script with a few extra features. It is a production-grade data infrastructure with five distinct layers:

Extraction layer — Custom-built scrapers for each target source, using the right tool for each: direct HTTP requests where content is server-rendered, headless browser automation with stealth configuration where JavaScript rendering is required, direct API calls where endpoints are accessible. Each scraper is built to handle the specific anti-bot profile of that source.

Normalisation layer — Every source returns data in its own format. The normalisation layer maps all of them to a consistent internal schema — standardised field names, consistent data types, uniform date formats, cleaned text. Records that fail validation are quarantined rather than silently stored with bad data.
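
The quarantine rule described above could look something like this. The required fields and the price check are hypothetical; the point is that invalid records are set aside with a reason instead of being stored or silently dropped.

```python
REQUIRED_FIELDS = ("name", "price", "listed_on")  # hypothetical internal schema


def validate(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record is valid."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS
                if record.get(field) in (None, "")]
    price = record.get("price")
    if isinstance(price, (int, float)) and price < 0:
        problems.append("negative price")
    return problems


def split_valid(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Separate clean records from quarantined ones, keeping the reasons."""
    clean, quarantined = [], []
    for record in records:
        problems = validate(record)
        if problems:
            quarantined.append({"record": record, "problems": problems})
        else:
            clean.append(record)
    return clean, quarantined
```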

Storage layer — Raw data is stored immediately after extraction, before normalisation, so it can be reprocessed if needed. Processed data is stored in a structured database optimised for the downstream use case, with appropriate indexes for query performance at scale.
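
A minimal sketch of that raw-first storage pattern, using SQLite from the Python standard library purely for illustration. The table and column names are assumptions, and a production system would more likely use a server database or object storage for the raw payloads.

```python
import json
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Create the raw and processed tables (names are illustrative)."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS raw_pages ("
                 "id INTEGER PRIMARY KEY, source TEXT, fetched_at TEXT, body TEXT)")
    conn.execute("CREATE TABLE IF NOT EXISTS products ("
                 "raw_id INTEGER REFERENCES raw_pages(id), name TEXT, price REAL)")
    # Index the column the downstream queries are expected to filter on.
    conn.execute("CREATE INDEX IF NOT EXISTS idx_products_name ON products(name)")
    return conn


def store_raw(conn: sqlite3.Connection, source: str, fetched_at: str,
              payload: dict) -> int:
    """Persist the untouched payload first so it can be reprocessed later."""
    cur = conn.execute(
        "INSERT INTO raw_pages (source, fetched_at, body) VALUES (?, ?, ?)",
        (source, fetched_at, json.dumps(payload)))
    conn.commit()
    return cur.lastrowid


def store_processed(conn: sqlite3.Connection, raw_id: int, name: str,
                    price: float) -> None:
    """Persist the normalised record, linked back to its raw row."""
    conn.execute("INSERT INTO products (raw_id, name, price) VALUES (?, ?, ?)",
                 (raw_id, name, price))
    conn.commit()
```

Because every processed row keeps a `raw_id`, a bug in the normalisation step can be fixed and the affected records rebuilt from the stored raw payloads without scraping the source again.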

Monitoring layer — Every pipeline run produces a structured log. Record counts are compared against historical baselines. Success rates are tracked. Alerts fire when something changes — immediately, not the next time someone checks manually.
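
The baseline comparison described above can be as simple as the check below. It is a sketch under stated assumptions: the baseline is the mean of recent run counts, and the 30% tolerance is an arbitrary illustrative value that would be tuned per source.

```python
from statistics import mean
from typing import Optional


def check_run(record_count: int, history: list[int],
              tolerance: float = 0.3) -> Optional[str]:
    """Return an alert message if this run deviates from the recent baseline."""
    if not history:
        return None  # nothing to compare against yet
    baseline = mean(history)
    if baseline == 0:
        return None  # avoid dividing by zero on an empty baseline
    change = (record_count - baseline) / baseline
    if abs(change) > tolerance:
        return (f"record count {record_count} is {change:+.0%} "
                f"against a baseline of {baseline:.0f}")
    return None
```

A run that returns a message would be routed to whatever alerting channel the team uses. A sudden drop often means a site change or a block, and a sudden rise often means duplicated or malformed records.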

Delivery layer — Data is delivered in whatever format and via whatever mechanism the downstream system requires: scheduled files, REST API, direct database writes, webhook push. The delivery layer is configured to the client's exact requirements, not constrained by what a tool supports.
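
As a small illustration of format-agnostic delivery, the sketch below renders the same records as CSV (for a scheduled file) and as JSON Lines (for an API or webhook consumer). The function names are hypothetical, and the transport itself (SFTP, HTTP, database write) is left out.

```python
import csv
import io
import json


def to_csv(records: list[dict], fields: list[str]) -> str:
    """Render records as CSV text for a scheduled file drop."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fields, extrasaction="ignore",
                            lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json_lines(records: list[dict]) -> str:
    """Render one JSON object per line, keys sorted for stable output."""
    return "\n".join(json.dumps(record, sort_keys=True) for record in records)
```

Keeping serialisation separate from extraction means a new downstream consumer only requires a new output function, not a change to the scrapers.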

The Takeaway

The tools covered in this post are not bad products. Several of them are excellent at what they do. The problem is not the tools — it is using them outside the use cases they were designed for.

For a researcher pulling data once a week, a browser extension is perfectly adequate. For a marketing team monitoring a handful of competitor pages, a no-code tool is a reasonable choice. For a business that needs reliable, high-volume, integrated data infrastructure that the organisation depends on — none of the tools in this list are the right answer.

The businesses extracting the most value from web data in 2026 are not the ones that found the best SaaS scraping tool. They are the ones that built the right infrastructure for their specific requirements — and maintained it as both the data sources and their own needs evolved.

If you are evaluating whether a custom scraping pipeline is the right approach for your situation — or if you have already tried the tools and found their limits — we are happy to give you an honest assessment. We work through your specific requirements, tell you clearly whether a tool would serve you well or whether custom is the right answer, and if it is custom, we scope and price it transparently.