Most data projects fail before they start, not because the model is wrong or the agent logic is broken, but because the data feeding them is stale, noisy, or missing entirely.

We've seen this across 200+ projects at BinaryBits. A startup founder wants to build an AI-powered competitor tracker. A CTO wants real-time market signals for a trading dashboard. An ops team wants to automate a workflow that depends on live inventory levels. The first hard question in every case: where does the data actually come from?

💡 What you'll get from this post: 20 specific data sources, with direct links, what they offer, API access details, and exactly how they're useful for data science pipelines, AI agents, and software MVPs. No generic "use public data" advice. Real sources, real use cases.

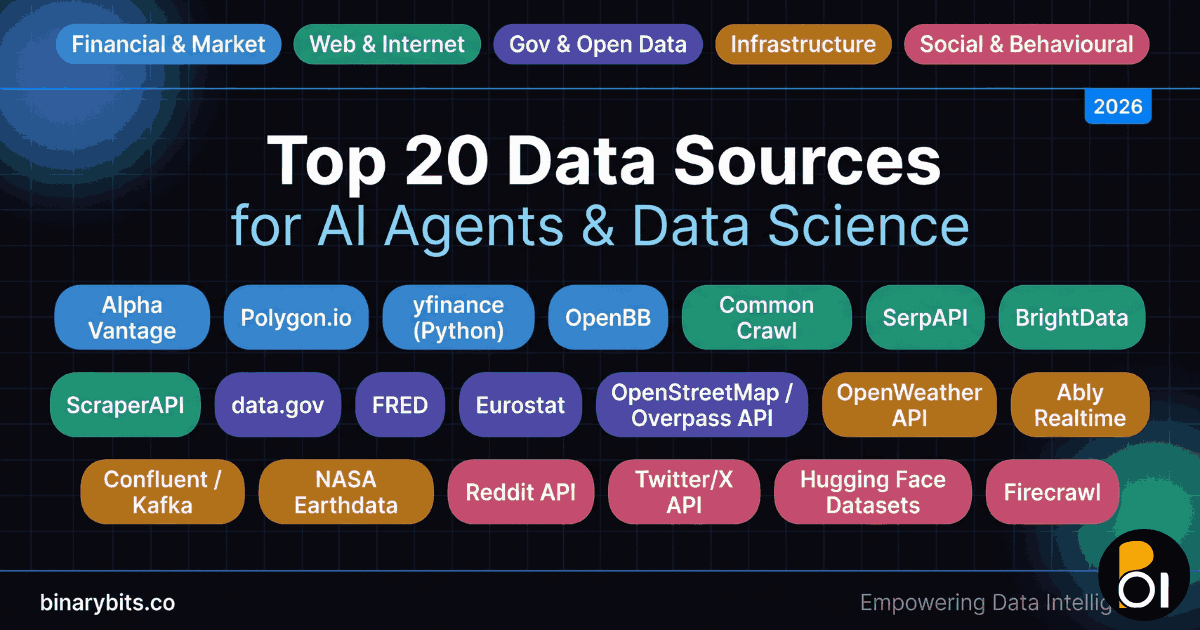

We've split these into five categories so you can jump to what's relevant: financial & market data, web & public internet data, government & open data, infrastructure & real-time streams, and social & behavioural data.

Why Your Data Source Choice Defines Your Project

Before the list, a quick framework. Every data source you pick makes three bets simultaneously:

- Freshness: Is it real-time, near-real-time (seconds–minutes), or batch (daily/weekly)?

- Reliability: Does the source have SLA guarantees, rate limits, or uptime risks?

- Legality & licensing: Can you build a commercial product on top of it?

Getting this wrong at the start means rebuilding your data layer six months in. We've done that once. Not fun.
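To make those three bets concrete, here's a minimal sketch of how you might record them per source and filter a shortlist. The `SourceProfile` fields, the freshness labels, and the example entries are hypothetical placeholders, not ratings of any real provider.

```python
from dataclasses import dataclass

# Lower rank means fresher data.
FRESHNESS_RANK = {"real-time": 0, "near-real-time": 1, "batch": 2}


@dataclass(frozen=True)
class SourceProfile:
    name: str
    freshness: str           # one of the FRESHNESS_RANK keys
    has_sla: bool            # reliability bet
    commercial_use_ok: bool  # licensing bet


def shortlist(sources, max_staleness, need_sla=False, commercial=True):
    """Return names of sources that satisfy all three constraints."""
    limit = FRESHNESS_RANK[max_staleness]
    return [
        s.name
        for s in sources
        if FRESHNESS_RANK[s.freshness] <= limit
        and (s.has_sla or not need_sla)
        and (s.commercial_use_ok or not commercial)
    ]


# Made-up candidates, purely for illustration.
candidates = [
    SourceProfile("hypothetical-tick-feed", "real-time", True, True),
    SourceProfile("hypothetical-daily-csv", "batch", False, True),
    SourceProfile("hypothetical-research-dump", "batch", False, False),
]

print(shortlist(candidates, "batch"))
print(shortlist(candidates, "near-real-time", need_sla=True))
```

Writing the constraints down this way forces the licensing question to be answered before any integration work starts.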

Now, the 20 sources.

Category 1: Financial & Market Data

1. Alpha Vantage, alphavantage.co

Alpha Vantage provides free and premium REST APIs for equities, forex, crypto, and technical indicators. The free tier gives you 500 API requests per day. Premium plans start at $50/month and unlock real-time intraday data with no throttling.

How it's useful: If you're building a financial dashboard MVP, a portfolio monitoring agent, or training a price-prediction model, Alpha Vantage is the fastest way to get structured OHLCV (open/high/low/close/volume) data into your pipeline. JSON responses are clean enough to pipe directly into pandas or a time-series database without heavy transformation.
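As a rough sketch of what "pipe directly into pandas" involves, the snippet below builds a daily time-series request URL and flattens the response into plain rows. It assumes the `TIME_SERIES_DAILY` function and the numbered field names (`"1. open"`, `"5. volume"`, and so on) that Alpha Vantage uses in its JSON; the sample payload values are made up, and `parse_daily` is an illustrative helper name.

```python
from urllib.parse import urlencode

BASE_URL = "https://www.alphavantage.co/query"


def build_daily_url(symbol, api_key):
    """Build the request URL for daily OHLCV bars."""
    params = {"function": "TIME_SERIES_DAILY", "symbol": symbol, "apikey": api_key}
    return f"{BASE_URL}?{urlencode(params)}"


def parse_daily(payload):
    """Flatten the nested response into a list of OHLCV dicts, oldest first."""
    series = payload.get("Time Series (Daily)", {})
    rows = []
    for day in sorted(series):
        bar = series[day]
        rows.append({
            "date": day,
            "open": float(bar["1. open"]),
            "high": float(bar["2. high"]),
            "low": float(bar["3. low"]),
            "close": float(bar["4. close"]),
            "volume": int(bar["5. volume"]),
        })
    return rows


# Hand-written sample in the assumed response shape (values are invented).
SAMPLE = {
    "Time Series (Daily)": {
        "2024-01-03": {"1. open": "101.0", "2. high": "102.0", "3. low": "100.5",
                       "4. close": "101.5", "5. volume": "1200"},
        "2024-01-02": {"1. open": "100.0", "2. high": "101.2", "3. low": "99.8",
                       "4. close": "101.0", "5. volume": "1000"},
    }
}

rows = parse_daily(SAMPLE)
print(rows[0]["date"], rows[0]["close"])
```

The resulting list of dicts can be passed straight to `pandas.DataFrame(rows)` if pandas is installed.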

2. Polygon.io, polygon.io

Polygon is a professional-grade market data provider with WebSocket streams for real-time tick data, REST endpoints for historical data, and an options data feed. Their Starter plan begins at $29/month. Enterprise clients get full market replay: every trade and every quote, reconstructed millisecond by millisecond.

How it's useful: For AI agents that need to react to live market events (say, an agent that monitors unusual options activity and fires alerts), Polygon's WebSocket API is the right tool. Latency is typically under 10ms from the exchange.
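The alerting half of such an agent can be sketched without any network code. The example below flags a message when its volume exceeds a multiple of the rolling average. The message shape (`{"symbol": ..., "volume": ...}`), the window size, and the multiplier are assumptions for illustration, not Polygon's actual message schema or a recommended threshold.

```python
from collections import deque


class VolumeSpikeDetector:
    """Flags a volume that exceeds `multiplier` times the rolling mean."""

    def __init__(self, window=5, multiplier=3.0):
        self.window = deque(maxlen=window)
        self.multiplier = multiplier

    def observe(self, volume):
        is_spike = False
        # Only judge once the window is full, so early ticks can't trigger alerts.
        if len(self.window) == self.window.maxlen:
            mean = sum(self.window) / len(self.window)
            is_spike = volume > self.multiplier * mean
        self.window.append(volume)
        return is_spike


def alerts_for(messages, detector):
    """Return the messages that the detector considers spikes."""
    return [m for m in messages if detector.observe(m["volume"])]


# Simulated stream; in a real agent these would arrive over a WebSocket.
ticks = [{"symbol": "XYZ", "volume": v} for v in [100, 110, 90, 105, 95, 900, 100]]
alerts = alerts_for(ticks, VolumeSpikeDetector(window=5, multiplier=3.0))
print(alerts)
```

Keeping the detector separate from the transport means the same logic can be replayed against historical data before it goes live.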

3. Yahoo Finance (via yfinance Python library), pypi.org/project/yfinance

yfinance is an unofficial Python wrapper around Yahoo Finance's data endpoints. It's free, requires no API key, and covers equities, ETFs, mutual funds, and crypto across global markets. The library is maintained actively and handles rate limiting internally.

How it's useful: For prototyping and early-stage data science MVPs, yfinance gets you from zero to structured historical data in 4 lines of Python. It's not built for production at scale (Yahoo Finance doesn't offer SLAs), but for validating a hypothesis fast, nothing beats its speed of setup.
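Here's roughly what that looks like in practice. This is a minimal sketch that assumes yfinance is installed (`pip install yfinance`) and that Yahoo's endpoints are reachable; `fetch_closes` and `daily_returns` are illustrative helper names, not part of the library.

```python
def fetch_closes(ticker, period="1y"):
    """Fetch daily closing prices for `ticker` (needs yfinance and network access)."""
    import yfinance as yf  # imported lazily so daily_returns works without it

    history = yf.Ticker(ticker).history(period=period)
    return history["Close"].tolist()


def daily_returns(closes):
    """Simple day-over-day returns from a list of closing prices."""
    return [(b - a) / a for a, b in zip(closes, closes[1:])]


# Offline demonstration with made-up prices:
print(daily_returns([100.0, 110.0, 99.0]))
```

With a live connection, `daily_returns(fetch_closes("AAPL"))` would chain the two steps.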

4. OpenBB, openbb.co

OpenBB is an open-source investment research platform with a Python SDK that aggregates data from 50+ providers including FRED, SEC EDGAR, Intrinio, and Tiingo. The SDK is free. Some underlying provider data requires separate API keys.

How it's useful: When you need fundamental analysis data (balance sheets, earnings, analyst estimates) alongside price data in a single unified interface, OpenBB removes 80% of the data-wrangling work. It's particularly strong for building analyst-assist AI agents that need to pull multiple financial data types in one call.

Category 2: Web & Public Internet Data

5. Common Crawl, commoncrawl.org

Common Crawl maintains a free, open dataset of petabyte-scale web crawl data, with over 3.4 billion web pages crawled monthly. The data is stored on AWS S3 in WARC format and can be queried via Athena or downloaded directly. It's free to access; you pay only for S3 egress costs.

How it's useful: For training NLP models, building text classification datasets, or doing large-scale SEO and content analysis, Common Crawl is the foundation that many LLMs were trained on. If your MVP involves analysing what the public internet says about a topic across millions of pages, this is your starting point.

6. SerpAPI, serpapi.com

SerpAPI provides structured JSON results from Google, Bing, DuckDuckGo, YouTube, and 15+ other search engines. Plans start at $50/month for 5,000 searches. It handles anti-bot detection, CAPTCHAs, and rendering; you just get clean, structured results.

How it's useful: For AI agents that need to answer questions using real-time search results (rather than training data), SerpAPI is the clean production-grade solution. It's also excellent for competitive intelligence MVPs, such as automatically tracking where competitors rank for specific keywords week over week.
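The rank-tracking idea boils down to finding a domain's position in the result list. The sketch below assumes the response carries an `organic_results` list whose items have `position` and `link` fields; the sample payload is invented and `rank_for_domain` is a hypothetical helper.

```python
from urllib.parse import urlparse


def rank_for_domain(serp_payload, domain):
    """Return the first organic position held by `domain`, or None if absent."""
    for result in serp_payload.get("organic_results", []):
        host = urlparse(result.get("link", "")).netloc.lower()
        # Match the bare domain or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return result.get("position")
    return None


# Invented sample in the assumed response shape.
SAMPLE = {
    "organic_results": [
        {"position": 1, "title": "Pricing", "link": "https://www.example.com/pricing"},
        {"position": 2, "title": "Home", "link": "https://competitor.example.org/"},
    ]
}

print(rank_for_domain(SAMPLE, "example.org"))
```

Storing one such rank per keyword per week is enough to chart movement over time.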

7. BrightData (formerly Luminati), brightdata.com

BrightData is a commercial data collection platform with a 72M+ IP proxy network, pre-built datasets for e-commerce, social media, and job boards, and a no-code scraping browser. Pricing is usage-based, starting around $500/month for serious data volumes.

How it's useful: When you need structured data from sites that actively block scrapers (Amazon, LinkedIn, Instagram, Glassdoor), BrightData's pre-built datasets let you bypass the scraping infrastructure entirely and get clean JSON. For MVPs that require competitive pricing data or job market intelligence at scale, it's the most reliable commercial option available.

💡 If you're building a product that needs structured data from the web at scale, whether that's for an AI agent, a real-time dashboard, or a data-enrichment pipeline, BinaryBits delivers 50,000+ clean data records daily from 20,000+ sources. We handle the scraping infrastructure so you don't have to.

8. ScraperAPI, scraperapi.com

ScraperAPI is a proxy and rendering API that handles IP rotation, browser rendering, and CAPTCHA solving behind a single endpoint. You send a URL; it returns the HTML. Free plan gives 1,000 requests/month. Paid plans start at $49/month for 100,000 requests.

How it's useful: For data science teams that want to build their own scraping logic without managing proxy infrastructure, ScraperAPI is the most developer-friendly option. Feed it a URL inside your Python or Node.js code, get the rendered HTML back. Then parse it yourself. It's particularly good for MVPs where the scraping logic is custom but the infrastructure needs to be reliable.
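The "send a URL, get HTML back, parse it yourself" flow might look like the sketch below, using only the standard library. It assumes the `api.scraperapi.com` endpoint with `api_key`, `url`, and optional `render` query parameters; `fetch_title` makes a real network call and needs a valid key, so the demonstration line only exercises the parsing step.

```python
from html.parser import HTMLParser
from urllib.parse import urlencode
from urllib.request import urlopen


def scraperapi_url(api_key, target_url, render=False):
    """Build the proxy request URL for a target page."""
    params = {"api_key": api_key, "url": target_url}
    if render:
        params["render"] = "true"  # ask for JavaScript rendering
    return "https://api.scraperapi.com/?" + urlencode(params)


class TitleParser(HTMLParser):
    """Collects the text inside the <title> element."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self.in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def extract_title(html):
    parser = TitleParser()
    parser.feed(html)
    return parser.title.strip()


def fetch_title(api_key, target_url):
    """Fetch a page through the proxy and return its title (network call)."""
    with urlopen(scraperapi_url(api_key, target_url), timeout=60) as resp:
        return extract_title(resp.read().decode("utf-8", errors="replace"))


print(extract_title("<html><head><title> Hello </title></head></html>"))
```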

Category 3: Government & Open Data

9. data.gov (US Government Open Data), data.gov

data.gov hosts 300,000+ datasets from US federal agencies covering agriculture, climate, education, finance, health, and transport. All data is free. Formats include CSV, JSON, XML, and APIs depending on the agency.

How it's useful: For civic tech MVPs, public health analytics tools, or economic research agents, data.gov is one of the few sources where the data is both authoritative and free for commercial use. FEMA disaster data, FDA recall data, and BLS employment data are three specific feeds that power real production applications.

10. FRED (Federal Reserve Economic Data), fred.stlouisfed.org

FRED hosts 800,000+ economic time-series datasets from 100+ national and international sources. The API is free with registration. Data includes GDP, inflation (CPI/PCE), interest rates, unemployment, housing starts, and more, updated as frequently as the source agencies release it.

How it's useful: For any AI agent or analytics product that needs macroeconomic context (a credit risk model, a market-timing agent, an economic forecasting dashboard), FRED is the most comprehensive free source of structured economic data on the internet. The Python library fredapi makes integration straightforward.
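If you'd rather call the REST API directly than use a wrapper, a minimal sketch looks like this. It assumes the `fred/series/observations` endpoint with `file_type=json`, and that missing observations are marked with `"."`; the sample values are made up.

```python
from urllib.parse import urlencode


def observations_url(series_id, api_key):
    """Build the request URL for one series' observations."""
    params = {"series_id": series_id, "api_key": api_key, "file_type": "json"}
    return "https://api.stlouisfed.org/fred/series/observations?" + urlencode(params)


def parse_observations(payload):
    """Return (date, value) pairs, skipping missing observations."""
    points = []
    for obs in payload.get("observations", []):
        if obs["value"] == ".":  # assumed marker for a missing value
            continue
        points.append((obs["date"], float(obs["value"])))
    return points


# Invented sample in the assumed response shape.
SAMPLE = {
    "observations": [
        {"date": "2023-01-01", "value": "3.4"},
        {"date": "2023-02-01", "value": "."},
        {"date": "2023-03-01", "value": "3.5"},
    ]
}

print(parse_observations(SAMPLE))
```

Dropping missing points at parse time keeps downstream models from silently treating a placeholder as a number.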

11. Eurostat, ec.europa.eu/eurostat

Eurostat is the EU's statistical office, publishing data on economics, population, trade, employment, and more across 27 EU member states. All data is free. The REST API returns JSON and SDMX formats. Data is updated quarterly to annually depending on the indicator.

How it's useful: If your product targets the EU market or your analysis requires cross-country European comparison, Eurostat is the authoritative source. EU e-commerce analytics tools, ESG data products, and immigration trend models all commonly use Eurostat as a primary data layer.

12. OpenStreetMap (Overpass API), wiki.openstreetmap.org

OpenStreetMap (OSM) is a crowdsourced world map with geospatial data on roads, buildings, POIs, land use, waterways, and more. The Overpass API lets you query the dataset programmatically. It's free under the ODbL licence, which allows commercial use with attribution.

How it's useful: For location-based MVPs (delivery routing apps, real estate tools, hyperlocal analytics platforms), OSM provides geospatial infrastructure that would cost thousands per month from Google Maps. An AI agent analysing foot traffic patterns, identifying underserved neighbourhoods, or routing field service teams can be built on OSM data for near-zero data cost.
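Querying Overpass means writing a short Overpass QL statement and reading back a list of elements. The sketch below builds a bounding-box query for cafes and extracts named points from a response. The `amenity=cafe` filter and the sample element are illustrative; the query would typically be POSTed to a public instance such as `https://overpass-api.de/api/interpreter`, which is not called here.

```python
def cafes_query(south, west, north, east, timeout=25):
    """Build an Overpass QL query for cafe nodes inside a bounding box."""
    bbox = f"{south},{west},{north},{east}"
    return (
        f"[out:json][timeout:{timeout}];"
        f'node["amenity"="cafe"]({bbox});'
        "out body;"
    )


def named_points(payload):
    """Return (name, lat, lon) for every node that carries a name tag."""
    return [
        (el["tags"]["name"], el["lat"], el["lon"])
        for el in payload.get("elements", [])
        if el.get("type") == "node" and "name" in el.get("tags", {})
    ]


# Invented sample in the shape of an Overpass JSON response.
SAMPLE = {
    "elements": [
        {"type": "node", "id": 1, "lat": 51.51, "lon": -0.12,
         "tags": {"amenity": "cafe", "name": "Example Cafe"}},
        {"type": "node", "id": 2, "lat": 51.52, "lon": -0.11,
         "tags": {"amenity": "cafe"}},
    ]
}

print(cafes_query(51.5, -0.13, 51.52, -0.1))
print(named_points(SAMPLE))
```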

Category 4: Infrastructure & Real-Time Streams

13. OpenWeather API, openweathermap.org/api

OpenWeather provides current conditions, hourly and daily forecasts, historical weather, and alerts for any global location. The free tier gives 1,000 API calls per day. Paid plans start at $40/month for higher volumes and one-call API access.

How it's useful: Weather data is a powerful exogenous variable in many prediction models. Retail demand forecasting, logistics optimisation, agriculture monitoring agents, and energy consumption predictions all benefit from integrating real-time weather signals. OpenWeather is the most widely used API for this, documented in every major language.
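To use weather as a model feature, the nested API response has to be flattened first. This sketch assumes the current-weather response shape (`main.temp`, `main.humidity`, `wind.speed`, `weather[0].main`, `dt`) and a request made with `units=metric`; the feature names and sample values are invented for illustration.

```python
def weather_features(payload):
    """Flatten a current-weather response into model-ready features."""
    main = payload.get("main", {})
    conditions = payload.get("weather") or [{}]
    return {
        "temp_c": main.get("temp"),            # Celsius only if units=metric was requested
        "humidity_pct": main.get("humidity"),
        "wind_mps": payload.get("wind", {}).get("speed"),
        "condition": conditions[0].get("main"),
        "observed_at": payload.get("dt"),      # Unix timestamp of the observation
    }


# Invented sample in the assumed response shape.
SAMPLE = {
    "weather": [{"main": "Rain"}],
    "main": {"temp": 12.3, "humidity": 81},
    "wind": {"speed": 4.1},
    "dt": 1700000000,
}

print(weather_features(SAMPLE))
```

Missing sections come back as `None` rather than raising, which makes gaps visible when the features are joined to sales or demand data.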

14. Ably Realtime, ably.com

Ably is a managed real-time messaging infrastructure platform: WebSockets, Server-Sent Events, MQTT, and HTTP streaming in one service. The free tier gives 6 million messages per month. Production plans start at $39/month.

How it's useful: For MVPs that need to push live data to a browser or mobile client (a trading dashboard, a live analytics feed, a real-time collaboration tool), Ably handles the WebSocket infrastructure so you don't have to. Your AI agent can publish events to Ably; your frontend subscribes and reacts instantly. No ops overhead.

15. Confluent / Apache Kafka (Confluent Cloud), confluent.io

Confluent Cloud is the managed version of Apache Kafka, a distributed event streaming platform capable of handling millions of events per second. Confluent Cloud has a free tier. Production workloads start at around $0.11 per CKU/hour.

How it's useful: When your data science pipeline needs to ingest, process, and route large volumes of real-time events (clickstreams, IoT sensor data, financial ticks, application logs), Kafka is the industry-standard backbone. AI agents that need to act on streaming events (rather than batch data) are built on top of Kafka or a managed equivalent like Confluent.

16. NASA Earthdata, earthdata.nasa.gov

NASA Earthdata provides free access to satellite imagery, climate models, atmospheric data, and oceanographic datasets. Registration is free. Data is available via HTTPS, OPeNDAP, and web APIs. Coverage spans decades of earth observation data.

How it's useful: For climate tech MVPs, agricultural monitoring tools, insurance risk models, and environmental AI agents, NASA's satellite data is unmatched in depth and free of cost. Vegetation indices (NDVI), land surface temperature, flood mapping, and wildfire detection data are all available and actively used in production analytics applications.

Category 5: Social & Behavioural Data

17. Reddit Data (Pushshift / Reddit API), reddit.com/dev/api

Reddit's official API provides access to posts, comments, subreddits, and user data. The free tier allows 100 API calls per minute. For research at scale, the Pushshift archive (available via academic data partnerships) covers years of historical Reddit data.

How it's useful: Reddit is one of the richest sources of authentic public opinion data on the internet. Sentiment analysis models, topic discovery agents, brand monitoring tools, and social listening dashboards all commonly ingest Reddit data. The r/WallStreetBets to stock price correlation research that emerged in 2021 is the most famous example of what's possible.
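Staying under a per-minute call limit is easiest with a small client-side limiter. Below is a minimal sliding-window sketch; the 100-calls-per-60-seconds default mirrors the figure quoted above, and the class name and injectable clock are illustrative choices, not part of any Reddit SDK.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Tracks call timestamps and reports how long to wait before the next call."""

    def __init__(self, max_calls=100, period=60.0, clock=time.monotonic):
        self.max_calls = max_calls
        self.period = period
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.calls = deque()

    def wait_time(self):
        """Seconds until another call is allowed (0.0 if allowed now)."""
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.calls and now - self.calls[0] >= self.period:
            self.calls.popleft()
        if len(self.calls) < self.max_calls:
            return 0.0
        return self.period - (now - self.calls[0])

    def record(self):
        """Register that a call was just made."""
        self.calls.append(self.clock())


limiter = SlidingWindowLimiter()
print(limiter.wait_time())
```

In a polling loop you would `time.sleep(limiter.wait_time())`, make the request, then call `limiter.record()`.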

18. Twitter/X API, developer.x.com

The X (formerly Twitter) API v2 provides access to tweets, user profiles, trends, and conversation threads. The Basic tier is $100/month for 10,000 tweets/month read access. The Pro tier at $5,000/month unlocks full archive search and streaming endpoints.

How it's useful: Real-time social listening, crisis monitoring agents, political sentiment trackers, and brand intelligence tools depend on Twitter/X data as a primary signal. Despite the pricing increases since 2023, it remains the most real-time public signal dataset available: events that are hours away from mainstream news can be detected in Twitter data minutes after they happen.

19. Hugging Face Datasets, huggingface.co/datasets

Hugging Face hosts 100,000+ open datasets across NLP, computer vision, audio, tabular, and multimodal domains. Datasets are free to download via the datasets Python library. Many include train/test splits, data cards, and example notebooks.

How it's useful: For AI and ML teams building or fine-tuning models, Hugging Face Datasets eliminates weeks of dataset sourcing and cleaning work. Whether you're fine-tuning an LLM on domain-specific text, training a sentiment classifier, or building a question-answering system, the dataset you need is almost certainly already on Hugging Face with documented structure and known biases.

20. Firecrawl, firecrawl.dev

Firecrawl is a modern web-to-Markdown extraction API built specifically for AI applications. You send it a URL or a site, and it returns clean Markdown, removing nav bars, ads, boilerplate, and rendering JS-heavy pages. The free tier gives 500 credits. Paid plans start at $16/month.

How it's useful: For AI agents that need to read and act on web content, such as research agents, content summarisers, and RAG (retrieval-augmented generation) systems that pull live context from external URLs, Firecrawl is the cleanest way to turn any webpage into an LLM-ready text format. It's specifically designed for AI pipelines, not general scraping, which means the output quality is consistently higher than browser-rendered HTML.
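Once a page has been converted to Markdown, a RAG system still needs to split it into retrievable chunks. The sketch below shows one simple approach: split at headings, with a character cap per chunk. It works on any Markdown string and does not call Firecrawl; the 500-character default is an arbitrary assumption, and a line starting with `#` inside a code fence would be treated as a heading.

```python
def chunk_markdown(markdown, max_chars=500):
    """Split Markdown into chunks at headings, capping each chunk's length."""
    chunks, current = [], []

    def flush():
        text = "\n".join(current).strip()
        if text:
            chunks.append(text)
        current.clear()

    for line in markdown.splitlines():
        starts_section = line.startswith("#")
        too_long = sum(len(l) + 1 for l in current) + len(line) > max_chars
        # Start a new chunk at each heading or when the cap would be exceeded.
        if current and (starts_section or too_long):
            flush()
        current.append(line)
    flush()
    return chunks


doc = "# Title\nIntro text.\n\n## Pricing\nPlans start low.\n"
print(chunk_markdown(doc))
```

Heading-aligned chunks tend to keep related sentences together, which helps retrieval return coherent context.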

How to Choose the Right Data Source for Your Project

Not every source here is right for every project. Here's the honest decision framework we use at BinaryBits when scoping data infrastructure:

| If your project needs… | Start with… |
|---|---|
| Real-time financial signals | Polygon.io (production) or Alpha Vantage (prototyping) |
| Web-scale text for NLP training | Common Crawl + Hugging Face Datasets |
| Live web data from protected sites | BrightData (commercial) or custom scraping via BinaryBits |
| Economic & government statistics | FRED + data.gov (US) or Eurostat (EU) |
| Real-time event streaming | Confluent/Kafka for volume; Ably for client-facing push |
| AI agent web browsing/reading | Firecrawl + SerpAPI in combination |
| Social sentiment signals | Reddit API (cost-effective) or X API (real-time premium) |
| Geospatial / location data | OpenStreetMap + NASA Earthdata for environmental layers |

When None of These Are Enough

Public APIs and open datasets cover a huge range of use cases. But there are three situations where they fall short:

- The data doesn't exist publicly. Niche industry data, proprietary competitor data, internal operational data: none of this is on a public API. You need either a custom scraping pipeline or a data partnership.

- The volume or frequency exceeds free tier limits. Processing 500,000 web pages per day, or ingesting 1 million financial ticks per hour, moves you into infrastructure territory that requires real engineering decisions, not just API calls.

- The data needs cleaning and structuring before it's usable. Raw HTML, inconsistent government CSV formats, and multi-language social media posts all need a data engineering layer before they become useful training data or agent inputs.
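As a small illustration of that last point, here's a sketch of one common cleaning step: normalising inconsistent CSV headers and stray whitespace before the data reaches a model or agent. The column names and values are invented, and `normalise_header` is a hypothetical helper.

```python
import csv
import io
import re


def normalise_header(name):
    """Lowercase a header and collapse punctuation/spaces into underscores."""
    name = re.sub(r"[^a-z0-9]+", "_", name.strip().lower())
    return name.strip("_")


def read_rows(csv_text):
    """Parse CSV text into dicts with clean keys and trimmed values."""
    reader = csv.reader(io.StringIO(csv_text))
    header = [normalise_header(h) for h in next(reader)]
    # Blank lines come back as empty lists and are skipped.
    return [
        dict(zip(header, (cell.strip() for cell in row)))
        for row in reader
        if row
    ]


# Invented messy input: padded cells, punctuation in headers, a blank line.
messy = "State Name , Unemployment Rate (%)\n Ohio , 4.1 \n\nTexas,3.9\n"
print(read_rows(messy))
```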

This is exactly the type of work BinaryBits handles for clients. We've built pipelines that collect 50,000+ structured records daily from sources that don't have clean APIs (job boards, real estate portals, e-commerce catalogues, LinkedIn profiles) and deliver them in formats ready for analytics or AI workflows.

Need a reliable data pipeline for your project?

Whether you're building a data science dashboard, training an AI agent, or launching a data-driven MVP, we can help you get the right data, in the right format, at the right frequency.

Frequently Asked Questions

What is the best free data source for building an AI agent in 2026?

For AI agents that need to read and act on web content, Firecrawl (free tier: 500 credits) and SerpAPI are the most purpose-built options. For knowledge-base and training data, Hugging Face Datasets offers 100,000+ free open datasets across text, image, and tabular domains. For economic and government data, FRED and data.gov are authoritative and completely free.

Which data sources work best for real-time data science pipelines?

For real-time pipelines, Polygon.io (WebSocket tick data), OpenWeather API (live weather), and Confluent/Kafka (event streaming) are the three most commonly combined. The right choice depends on your data domain: financial ticks need Polygon; IoT/application events need Kafka; environmental signals need OpenWeather or NASA Earthdata.

What data sources are best for an MVP with a small budget?

For budget-constrained MVPs: yfinance (free, no API key) for financial data, data.gov and FRED (both free) for economic data, OpenStreetMap (free) for geospatial data, and Hugging Face Datasets (free) for ML training data. Reddit's API free tier is also generous enough to power a sentiment analysis MVP. Most early-stage products can be validated on these free sources before paying for commercial APIs.

Can I use web scraping to build my own data source for an AI product?

Yes, and for many products, custom web scraping is the only way to get the specific data you need. The key considerations are: (1) check the site's robots.txt and terms of service; (2) use a reliable proxy infrastructure for sites that block scrapers; (3) implement rate limiting to avoid overloading target servers. Tools like ScraperAPI and BrightData handle the infrastructure; if you need a custom pipeline at scale, BinaryBits builds and maintains scraping infrastructure delivering 50,000+ records daily for clients across 10+ countries.
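Considerations (1) and (3) can be checked in code before a crawl starts. The sketch below uses Python's standard `urllib.robotparser` to test whether a URL is allowed and to read any crawl delay. The robots.txt content and the `my-bot` user agent are invented, and this covers robots.txt only, not a site's terms of service.

```python
from urllib.robotparser import RobotFileParser


def robots_policy(robots_txt, user_agent, url):
    """Return (allowed, crawl_delay) for `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url), parser.crawl_delay(user_agent)


# Invented robots.txt for illustration.
ROBOTS = """User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

print(robots_policy(ROBOTS, "my-bot", "https://example.com/products"))
print(robots_policy(ROBOTS, "my-bot", "https://example.com/private/report"))
```

In a real crawler you would fetch the site's live robots.txt and sleep for at least the returned delay between requests.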