You're a startup founder with 8 people doing the work of 25. Customer emails are piling up. Sales research is manual and slow. Your ops team spends three hours a day copy-pasting data between tools. You've heard that AI agents can fix this, but every article you read is either a vendor pitch or so technical it might as well be a PhD thesis.

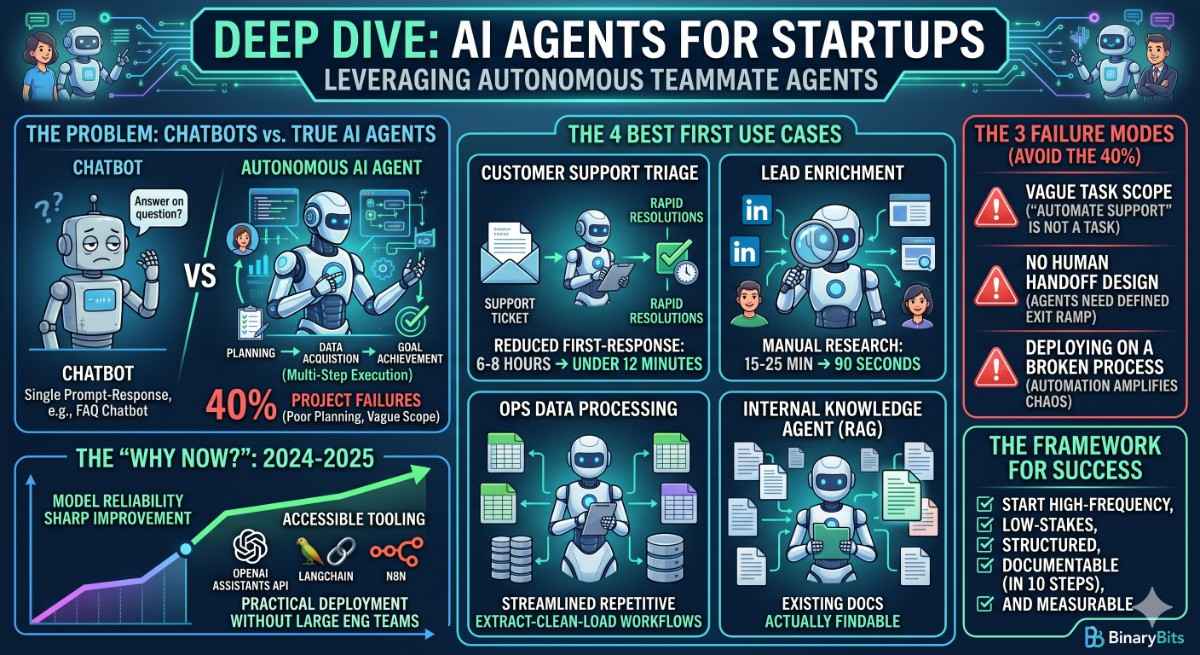

Here's the actual problem: 40% of AI agent projects fail before they deliver any value. Not because the technology doesn't work. Because companies deploy agents without defining what problem they're solving, what "done" looks like, or who owns the output.

💡 What you'll get from this post: A practical guide to deploying AI agents as real "teammates" in a startup — with specific use cases, a framework for deciding where to start, and the mistakes that kill 4 in 10 projects before they produce results.

TL;DR

- AI agents are not chatbots. They execute multi-step tasks autonomously — reading, deciding, acting, and reporting back.

- The best first deployments for startups: customer support triage, lead enrichment, ops data processing, and internal knowledge retrieval.

- 40% of projects fail due to three avoidable mistakes: vague task scope, no human handoff design, and deploying on broken processes.

- You don't need to build everything custom. n8n, LangChain, and OpenAI Assistants handle most early-stage cases well.

- Start with one agent, one workflow, and one success metric. Expand from there.

What an AI Agent Actually Is (And What It Isn't)

Most founders encounter the term "AI agent" and picture a chatbot with a slightly bigger vocabulary. That's not what we're talking about.

A chatbot responds to a prompt. You ask it something, it answers, and then it waits for your next message. An AI agent executes a goal. It breaks the goal into steps, uses tools to complete those steps, checks its own output, adjusts if something goes wrong, and reports the result, all without you managing each step manually.

The technical term is multi-step reasoning with tool use. The practical translation: an agent can read your CRM, check the company's website, pull recent news about the prospect, write a personalised outreach email, and log it in your task manager — all in one run, triggered by a single event.
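To make "multi-step reasoning with tool use" concrete, here is a minimal sketch of the loop in Python. It is an illustration under stated assumptions, not a production design: the planner is a hard-coded stub standing in for the model call that would normally choose the next tool, and the tool names (`read_crm`, `fetch_news`, `draft_email`, `log_task`) and their outputs are hypothetical placeholders.

```python
from typing import Callable, Dict, Optional

# Hypothetical tools. In a real agent each would call an external system
# (CRM, news search, the LLM, your task manager) instead of returning a string.
TOOLS: Dict[str, Callable[[dict], str]] = {
    "read_crm": lambda ctx: f"{ctx['lead']} is a prospect at {ctx['company']}",
    "fetch_news": lambda ctx: f"{ctx['company']} announced a new product",
    "draft_email": lambda ctx: f"Hi {ctx['lead']}, congrats on the launch at {ctx['company']}!",
    "log_task": lambda ctx: f"Logged follow-up for {ctx['lead']}",
}

def plan_next_step(ctx: dict) -> Optional[str]:
    """Stub planner. A real agent would ask the model which tool to call next."""
    for step in ("read_crm", "fetch_news", "draft_email", "log_task"):
        if step not in ctx["results"]:
            return step
    return None  # goal reached

def run_agent(lead: str, company: str, max_steps: int = 10) -> dict:
    """Run the plan -> act -> check loop and return a report of every step."""
    ctx = {"lead": lead, "company": company, "results": {}}
    for _ in range(max_steps):  # hard cap so a confused planner can't loop forever
        step = plan_next_step(ctx)
        if step is None:
            break
        output = TOOLS[step](ctx)
        if not output:  # check: an empty result means the step failed, so stop
            ctx["results"][step] = "FAILED: needs human review"
            break
        ctx["results"][step] = output
    return ctx["results"]

report = run_agent("Dana", "Acme")
```

The returned `report` holds one entry per step in the order the steps ran, which is the "reports back" part of the definition. Swapping the stub planner for a model call is what turns this fixed pipeline into an agent that can reorder or skip steps.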

That's the difference. Not smarter answers. Autonomous execution of a workflow that previously required a human to string together five different tools.

Why 2026 Is the Year Startups Can Actually Use This

Two things changed in the last 18 months that make agents genuinely practical for startups with small teams and limited engineering budgets.

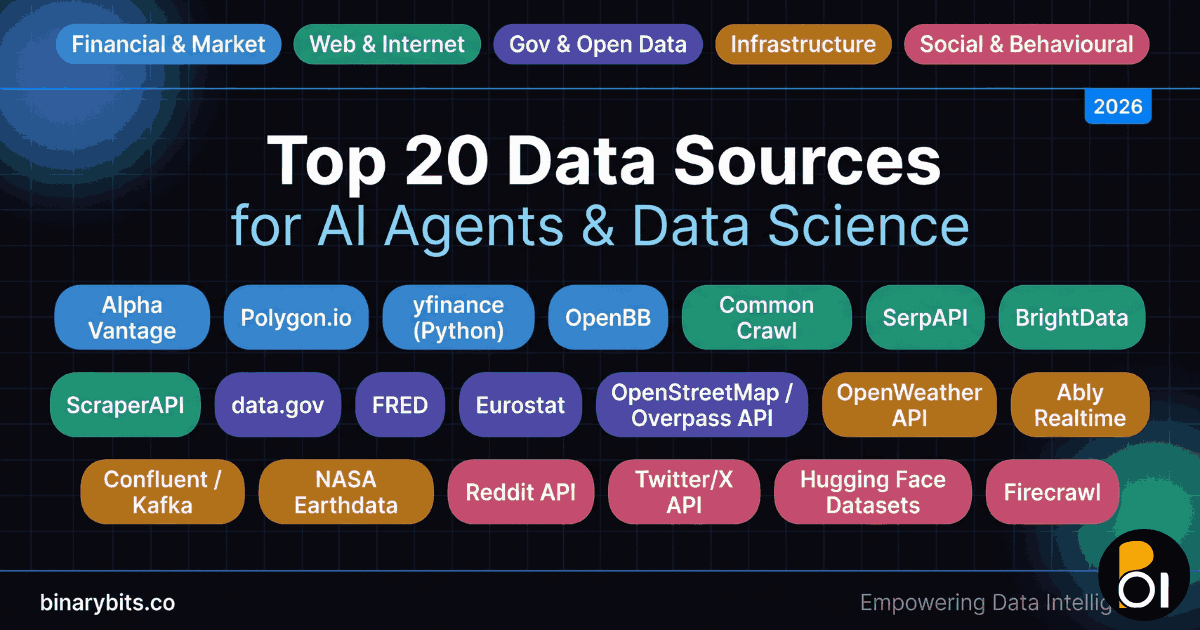

First, model reliability improved sharply. Early agents (2023 to early 2024) hallucinated tool calls, got stuck in loops, and produced outputs you couldn't trust without heavy validation. GPT-4o, Claude 3.5, and the open-source models that followed made reliable multi-step execution realistic outside of a controlled lab setting.

Second, the tooling layer matured. Tools like n8n, LangChain, and the OpenAI Assistants API mean a startup doesn't need to build orchestration infrastructure from scratch. A senior engineer can now wire up a working agent prototype in 2–3 days; two years ago, that was 3–6 weeks of work.

The opportunity is real. The question is where to aim it first.

The 4 AI Agent Use Cases That Actually Work for Startups

We've built AI agent workflows across customer support, sales research, operations, and internal tooling. The four use cases below consistently deliver measurable results within 30–60 days of deployment — which matters when you're a startup and every engineering hour needs to justify itself.

1. Customer Support Triage Agent

The agent reads incoming support tickets, classifies them by type and urgency, pulls the relevant customer data from your CRM, drafts a response using your knowledge base, and routes tickets it can't confidently handle to a human — flagged with context already filled in.

What this replaces: 2–4 hours per day of a human reading and sorting tickets, pulling CRM context manually, and writing templated responses. In our AI customer support case study, this pattern reduced first-response time from 6–8 hours to under 12 minutes for 73% of ticket types.

What it doesn't replace: escalated support, complex relationship management, and situations where empathy and discretion matter. Build in a clear human handoff condition from day one.
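A minimal sketch of the triage step, assuming a keyword classifier in place of the LLM classifier a production agent would use. The categories, keyword lists, the `CRM` dict, and the 0.6 threshold are all hypothetical. What matters is the shape: classify, attach customer context, and route anything uncertain to a human with that context already filled in.

```python
from dataclasses import dataclass, field

# Hypothetical keyword lists; a real agent would ask an LLM to classify.
CATEGORIES = {
    "billing": ["invoice", "refund", "charge", "payment"],
    "bug": ["error", "crash", "broken", "not working"],
    "account": ["password", "login", "reset", "locked"],
}
URGENT_WORDS = ["urgent", "asap", "outage"]

# Hypothetical CRM lookup keyed by customer email.
CRM = {"ana@example.com": {"plan": "Pro", "mrr": 499}}

@dataclass
class TriageResult:
    category: str
    urgency: str
    confidence: float
    route: str  # "draft_reply" or "human"
    context: dict = field(default_factory=dict)

def triage(email: str, body: str, threshold: float = 0.6) -> TriageResult:
    text = body.lower()
    # Count keyword hits per category.
    hits = {cat: sum(w in text for w in words) for cat, words in CATEGORIES.items()}
    total = sum(hits.values())
    category = max(hits, key=hits.get) if total else "unknown"
    # Confidence = share of all keyword hits that went to the winning category,
    # so a ticket matching several categories counts as ambiguous.
    confidence = hits[category] / total if total else 0.0
    urgency = "high" if any(w in text for w in URGENT_WORDS) else "normal"
    # Pre-filled context travels with the ticket whichever way it is routed.
    context = {"customer": CRM.get(email, {}), "ticket": body}
    route = "draft_reply" if confidence >= threshold else "human"
    return TriageResult(category, urgency, confidence, route, context)
```

For example, "I was charged twice, please refund ASAP" is routed to `draft_reply` as high-urgency billing, while "Login error after my payment" hits three categories equally and goes to a human.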

2. Lead Enrichment Agent

The agent takes a new lead (name, company, email) and enriches it automatically: company size, funding stage, tech stack, recent news, relevant LinkedIn activity. It scores the lead based on your ICP criteria and drops a summary into your CRM before your sales rep picks up the phone.
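Once the agent has gathered the enrichment data, scoring against ICP criteria is usually plain, deterministic code rather than another model call. A minimal sketch, assuming a hypothetical ICP (20–200 employees, Seed to Series B, uses HubSpot, has recent news) with made-up weights that sum to 100; substitute your own criteria.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class EnrichedLead:
    name: str
    company: str
    employees: int
    funding_stage: str
    tech_stack: List[str]
    recent_news: List[str]

# Hypothetical ICP: each criterion is a (weight, test) pair.
ICP_CRITERIA = {
    "size_fit": (30, lambda lead: 20 <= lead.employees <= 200),
    "stage_fit": (30, lambda lead: lead.funding_stage in {"Seed", "Series A", "Series B"}),
    "uses_hubspot": (25, lambda lead: "HubSpot" in lead.tech_stack),
    "has_recent_news": (15, lambda lead: len(lead.recent_news) > 0),
}

def score_lead(lead: EnrichedLead) -> Tuple[int, List[str]]:
    """Return a 0-100 score plus the matched criteria (useful for the rep's brief)."""
    matched = [name for name, (_, test) in ICP_CRITERIA.items() if test(lead)]
    score = sum(ICP_CRITERIA[name][0] for name in matched)
    return score, matched

lead = EnrichedLead("Dana", "Acme", 45, "Series A", ["HubSpot", "AWS"], [])
score, matched = score_lead(lead)
```

Here `score` is 85 with `size_fit`, `stage_fit`, and `uses_hubspot` matched. Keeping the scoring rules out of the prompt makes them easy to audit and tweak when sales disagrees with a score.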

Manual lead research takes 15–25 minutes per lead. An enrichment agent does the same job in 90 seconds. Across 50 new leads per week, that's roughly 12–21 hours of rep time recovered, plus the rep actually reads the brief because it's already there waiting for them.
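The arithmetic behind that estimate, as a quick Python check you can rerun with your own numbers. It counts only rep time, on the assumption that the agent's 90-second runs happen unattended.

```python
def hours_recovered(minutes_per_lead: float, leads_per_week: int) -> float:
    """Weekly rep hours freed if the agent fully takes over lead research."""
    return minutes_per_lead * leads_per_week / 60

low = hours_recovered(15, 50)   # 750 minutes
high = hours_recovered(25, 50)  # 1,250 minutes
print(f"{low:.1f}-{high:.1f} hours per week")  # 12.5-20.8 hours per week
```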

We documented a version of this in our lead enrichment agent case study. The stack was straightforward: OpenAI Assistants API + web fetch tools + HubSpot integration via REST API.
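The HubSpot leg of a stack like that comes down to one authenticated call to HubSpot's CRM v3 objects API (`PATCH /crm/v3/objects/contacts/{contactId}` with a `properties` body). A minimal sketch using only Python's standard library; it builds the request without sending it. The property names `icp_score` and `enrichment_summary` are hypothetical custom properties you would need to create in HubSpot first, and `YOUR_TOKEN` is a placeholder for a private-app access token.

```python
import json
import urllib.request

HUBSPOT_CONTACTS = "https://api.hubapi.com/crm/v3/objects/contacts"

def build_contact_update(contact_id: str, score: int, summary: str, token: str) -> urllib.request.Request:
    """Build (but don't send) a PATCH request that writes enrichment results to a contact."""
    body = {
        "properties": {
            # Hypothetical custom properties; create them in HubSpot first.
            "icp_score": str(score),
            "enrichment_summary": summary,
        }
    }
    return urllib.request.Request(
        url=f"{HUBSPOT_CONTACTS}/{contact_id}",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="PATCH",
    )

req = build_contact_update("12345", 85, "Series A, 45 staff, uses HubSpot", token="YOUR_TOKEN")
# To send it for real: urllib.request.urlopen(req)
```

Separating "build" from "send" keeps the integration testable without network access, and it gives the observability layer a clean point to log exactly what the agent wrote to the CRM.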

3. Ops Data Processing Agent

This one is less glamorous but often the highest-ROI deployment. Most startups have at least one manual data process: pulling reports from one tool, cleaning them, copying values into another tool, and sending a summary to someone. This takes 2–5 hours per week and nobody enjoys doing it.

An ops agent handles the entire loop: extract → clean → transform → load → summarise → notify. It runs on a schedule, flags anomalies, and only needs human attention when something is actually wrong. We built one of these using n8n + Python for a client processing 50,000+ records per week — replacing what had been a 6-person manual review process.
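The extract → clean → transform → load → summarise → notify loop maps naturally onto a chain of small functions. A minimal sketch in plain Python, assuming CSV input with hypothetical `region` and `revenue` columns, a list standing in for the destination tool, and `print` standing in for a Slack or email notification. The anomaly rule (a value more than 3× the median) is an arbitrary placeholder.

```python
import csv
import io
import statistics

RAW = """region,revenue
north,1200
south, 1150
east,
west,9800
central,1300
"""

def extract(raw: str) -> list:
    return list(csv.DictReader(io.StringIO(raw)))

def clean(rows: list) -> list:
    # Strip whitespace, ignore overflow columns, and drop rows with missing values.
    cleaned = [{k: (v or "").strip() for k, v in r.items() if k is not None} for r in rows]
    return [r for r in cleaned if all(r.values())]

def transform(rows: list) -> list:
    return [{"region": r["region"], "revenue": float(r["revenue"])} for r in rows]

def find_anomalies(rows: list, factor: float = 3.0) -> list:
    median = statistics.median(r["revenue"] for r in rows)
    return [r["region"] for r in rows if r["revenue"] > factor * median]

def run_pipeline(raw: str, destination: list) -> str:
    rows = transform(clean(extract(raw)))
    destination.extend(rows)  # "load": stand-in for writing to the target tool
    anomalies = find_anomalies(rows)
    total = sum(r["revenue"] for r in rows)
    summary = f"Loaded {len(rows)} rows, total revenue {total:,.0f}"
    if anomalies:
        summary += f"; needs review: {', '.join(anomalies)}"
    print(summary)  # "notify": stand-in for Slack/email
    return summary

loaded: list = []
summary = run_pipeline(RAW, loaded)
```

On the sample data this drops the incomplete `east` row, loads four rows, and flags `west` for review. Scheduling is left to whatever runs the job, such as cron or a scheduled trigger in n8n.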

4. Internal Knowledge Agent (RAG)

Startups move fast and document slowly. By the time you have 15 people, nobody can find the answer to "what was the decision on the pricing model" or "how do we handle enterprise contract negotiations" without asking someone who was in the room.

A RAG (retrieval-augmented generation) agent indexes your internal docs, Notion pages, Slack history, and meeting notes — and answers questions accurately, with citations to the source. It doesn't replace documentation. It makes the documentation you have actually accessible.
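The retrieval half of RAG is what makes the citations possible: the agent first finds the most relevant chunks, then the model answers only from them and cites where they came from. A minimal sketch of that retrieval step, assuming simple word-overlap scoring in place of the embedding search a production system would use. The documents, source labels, and stopword list are hypothetical.

```python
import re
from collections import Counter

# Hypothetical indexed chunks: (source, text).
DOCS = [
    ("notion/pricing-decision", "We decided on usage-based pricing with a free tier in March."),
    ("slack/#sales", "Enterprise contracts go through legal review before any discount is offered."),
    ("notes/onboarding", "New hires get laptop access on day one."),
]
STOPWORDS = {"the", "a", "on", "was", "what", "how", "do", "we", "is", "in", "of", "to", "with"}

def tokens(text: str) -> Counter:
    return Counter(w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS)

def retrieve(question: str, k: int = 2) -> list:
    """Rank chunks by shared words with the question; return the top k with sources."""
    q = tokens(question)
    scored = [(sum((q & tokens(text)).values()), source, text) for source, text in DOCS]
    scored.sort(key=lambda s: s[0], reverse=True)
    return [(source, text) for score, source, text in scored[:k] if score > 0]

def build_prompt(question: str) -> str:
    """Assemble a grounded prompt; the LLM call itself is out of scope here."""
    context = "\n".join(f"[{src}] {text}" for src, text in retrieve(question))
    return f"Answer using only the sources below and cite them.\n{context}\n\nQ: {question}"
```

Asking "What was the decision on the pricing model?" retrieves only the `notion/pricing-decision` chunk, so the model has one source to cite. Because the source label travels with each chunk into the prompt, citations come from the retrieval step rather than the model's memory.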

This is one of the most underrated first deployments for startups scaling from 10 to 50 people. The productivity drag from context loss during that growth stage is significant.

Why 40% of AI Agent Projects Fail (And How to Not Be That 40%)

We see the same three failure modes repeatedly. None of them are technical.

Failure Mode 1: Vague Task Scope

The brief was "automate our customer support." That's not a task scope. That's a department. An agent needs a specific, bounded workflow with a clear start event, a defined set of steps, and an unambiguous success condition.

What we thought: We can figure out the edge cases as we go.

What actually happened: The agent handled 60% of cases fine. The other 40% hit edge cases nobody had defined. The agent either failed silently or did something wrong with enough confidence that nobody caught it for two weeks.

What to do instead: Before writing a line of code, document the 10 most common scenarios the agent will encounter and the exact expected behaviour for each.
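One lightweight way to hold yourself to that is to write the scenarios down as data and run the agent against them before every release. A minimal sketch, assuming a hypothetical `classify_ticket` function as the agent step under test (a trivial rule-based stub here); in practice you would list all 10 scenarios.

```python
# Each documented scenario pairs a realistic input with the exact expected behaviour.
SCENARIOS = [
    {"name": "refund request", "input": "Please refund my last invoice", "expected": "billing"},
    {"name": "login failure", "input": "I can't login after the reset", "expected": "account"},
    {"name": "out of scope", "input": "Can you recommend a good laptop?", "expected": "escalate"},
    # ...document the rest of your top 10 the same way
]

def classify_ticket(text: str) -> str:
    """Hypothetical stand-in for the agent step being tested."""
    text = text.lower()
    if "refund" in text or "invoice" in text:
        return "billing"
    if "login" in text or "password" in text:
        return "account"
    return "escalate"  # anything undocumented goes to a human

def run_scenarios() -> list:
    """Return the names of scenarios where the agent's behaviour didn't match the spec."""
    return [s["name"] for s in SCENARIOS if classify_ticket(s["input"]) != s["expected"]]

failures = run_scenarios()
print("all scenarios pass" if not failures else f"failing: {failures}")
```

Including an explicit out-of-scope scenario is deliberate: it checks that the agent escalates instead of guessing.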

Failure Mode 2: No Human Handoff Design

Every agent needs a defined exit ramp — a condition under which it stops, flags the situation, and hands off to a human with context intact. Teams that skip this end up with agents that either fail silently or make confident mistakes nobody catches until the damage is done.

The rule we use: if the agent's confidence in its action is below a defined threshold, or if the scenario type isn't one you documented up front, it escalates. Always. No exceptions for "just this once."
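Written as code, that rule is deliberately boring: one function that every action passes through, with no override parameter. A minimal sketch; the 0.8 threshold and the scenario names in `KNOWN_SCENARIOS` are hypothetical, and "confidence" assumes your agent produces some score (for example a classifier probability or a rating the model reports about its own answer).

```python
from typing import Optional

KNOWN_SCENARIOS = {"refund_request", "login_failure", "shipping_status"}  # hypothetical
CONFIDENCE_THRESHOLD = 0.8  # hypothetical; tune per workflow

def should_escalate(scenario: str, confidence: Optional[float]) -> bool:
    """True if the agent must stop and hand off to a human. No override flag, by design."""
    if scenario not in KNOWN_SCENARIOS:
        return True  # unknown scenario type: always escalate
    if confidence is None:
        return True  # no confidence signal: treat as low confidence
    return confidence < CONFIDENCE_THRESHOLD

def handle(scenario: str, confidence: Optional[float], context: dict) -> dict:
    if should_escalate(scenario, confidence):
        # Hand off with context intact so the human doesn't start from zero.
        return {"action": "escalate", "scenario": scenario,
                "confidence": confidence, "context": context}
    return {"action": "proceed", "scenario": scenario}
```

Treating a missing score as low confidence is the safe default; it means a broken scoring step produces escalations rather than silent, confident mistakes.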

Failure Mode 3: Deploying on a Broken Process

An agent automates what's there. If the process it's automating is chaotic — inconsistent inputs, unclear ownership, missing data — the agent amplifies the chaos at machine speed.

Before you build an agent for a process, you should be able to write down that process in 10 steps or fewer. If you can't, the process isn't ready for automation. Fix the process first, then deploy the agent.

How to Choose Your First Agent: A Decision Framework

Don't start with the most impressive use case. Start with the one that has the clearest ROI and the least risk if something goes wrong.

| Criteria | Good First Agent | Bad First Agent |
|---|---|---|
| Task frequency | Happens 10+ times per day | Happens once a week |
| Output stakes | Low: mistake is reversible or caught by a human | High: mistake causes customer or financial impact |
| Input consistency | Structured, predictable inputs | Free-form, highly variable inputs |
| Process clarity | Can be documented in 10 steps | Requires judgment and context most humans don't have |
| Measurability | Clear success metric (time saved, tickets handled) | Subjective or hard-to-measure success |

A workflow that lands in the "Good First Agent" column on all five criteria (high frequency, low stakes, structured inputs, documentable process, measurable output) is your first agent. Everything else waits.
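If you're auditing several candidate workflows at once, the table reduces to a simple checklist. A minimal sketch that counts how many "Good First Agent" criteria each candidate meets; the workflow names and their values are illustrative, while the 10-per-day and 10-step cutoffs come from the table.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    runs_per_day: float
    mistakes_reversible: bool  # output stakes
    structured_inputs: bool    # input consistency
    steps_to_document: int     # process clarity
    has_clear_metric: bool     # measurability

def criteria_met(c: Candidate) -> int:
    checks = [
        c.runs_per_day >= 10,
        c.mistakes_reversible,
        c.structured_inputs,
        c.steps_to_document <= 10,
        c.has_clear_metric,
    ]
    return sum(checks)

# Illustrative candidates from a hypothetical audit.
candidates = [
    Candidate("support triage", 40, True, True, 7, True),
    Candidate("contract negotiation", 0.2, False, False, 25, False),
    Candidate("weekly board report", 0.2, True, True, 8, True),
]
# Only candidates meeting all five criteria qualify as a first agent.
first_agents = [c.name for c in candidates if criteria_met(c) == 5]
print(first_agents)  # ['support triage']
```

The weekly board report scores 4 of 5: a decent later candidate, but it fails on frequency, so it waits.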

Build vs. Buy: What Actually Makes Sense for Startups

You don't need to build a custom AI agent from scratch to start. For most startup use cases in 2026, the honest answer is: use an existing orchestration tool and add custom logic where you need it.

n8n is our default recommendation for workflow automation agents where the orchestration is clear and the integrations are standard. It has 400+ built-in connectors, can be self-hosted on your own infrastructure if you need it, and a non-AI engineer can genuinely build a working workflow with it in a day or two.

LangChain + Python is the right choice when you need custom reasoning logic, complex multi-agent coordination, or integrations n8n doesn't support natively. More powerful, more maintenance overhead.

The OpenAI Assistants API works well for single-domain agents where you want OpenAI to handle context window management and tool routing. Good for prototyping fast; less flexible for complex multi-step flows.

If you have fewer than 3 engineers and no one with LLM experience, we work with startups to scope and build this without you needing to become an AI company to do it. Most first agents take 2–4 weeks to production-ready with the right engineering support.

What We'd Do Differently: Lessons from 30+ Agent Deployments

We've built AI agent systems for startups, agencies, and enterprise ops teams. Here's what we got wrong in the early days.

Mistake 1: Over-engineering the first version. We built a beautiful multi-agent orchestration system for a client whose actual problem was answering 20 types of customer questions. A single RAG agent with a good knowledge base would have done the job. We spent 3 weeks building something they didn't need yet. Start with the simplest thing that solves the problem.

Mistake 2: Skipping observability. You cannot improve what you cannot measure. The first version of every agent we build now includes a logging layer that captures: input, steps taken, output, confidence score (where available), and whether a human override was triggered. Without this, you're flying blind when something breaks.
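A minimal sketch of such a logging layer, assuming one JSON line per agent run appended to a local file. The fields mirror the list above; the class name, the record shape, and the file location are hypothetical illustrations rather than any particular tool's format, and most teams would later ship these records to a log store they already use.

```python
import json
import os
import tempfile
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Optional

@dataclass
class AgentRunLog:
    """One record per agent run: input, steps, output, confidence, override."""
    input_data: dict
    steps: list = field(default_factory=list)
    output: Optional[str] = None
    confidence: Optional[float] = None  # None where no score is available
    human_override: bool = False
    run_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    started_at: float = field(default_factory=time.time)

    def step(self, tool: str, result: str) -> None:
        """Record each tool call as it happens, not just the final answer."""
        self.steps.append({"tool": tool, "result": result, "at": time.time()})

    def write(self, path: str) -> str:
        """Append the run as one JSON line and return that line."""
        line = json.dumps(asdict(self))
        with open(path, "a", encoding="utf-8") as f:
            f.write(line + "\n")
        return line

# Example run, logged to a file in the system temp directory.
log_path = os.path.join(tempfile.gettempdir(), "agent_runs.jsonl")
log = AgentRunLog(input_data={"ticket_id": 42})
log.step("classify", "billing")
log.output = "Drafted refund reply"
log.confidence = 0.91
record = json.loads(log.write(log_path))
```

Logging every step, not just the final output, is what lets you answer "where did it go wrong?" after the fact.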

Mistake 3: Treating "it works in testing" as production-ready. Test data is clean. Production data is messy. Allocate 30–40% of your build time to edge case handling, because that's where agents fail publicly. The support agent that handles 90% of tickets beautifully is remembered for the 10% it handled catastrophically.

The Takeaway

AI agents are not magic. They are a way to give a startup the execution capacity of a team twice its size — if you deploy them on the right problems, with the right design, and with appropriate human oversight.

The 40% failure rate isn't a technology problem. It's a planning problem. Teams that spend two hours clearly defining the task scope, the success metric, and the human handoff condition before they write a line of code have a dramatically better outcome than teams that jump straight to building.

Start with one agent. Define it precisely. Measure the result. Then expand. That's how you build an AI-augmented team that actually works — instead of an AI project that sounded impressive in a board meeting and quietly got shelved six months later.

If you're at the point of choosing where to start, our AI agents service page covers what we build and how we work. Or if you'd rather just talk through your specific workflows first, book a 30-minute call — no pitch, just a conversation.

Ready to Deploy Your First AI Agent?

We build AI agents and workflow automation systems for startups — from scoping to production. Most first agents are live in 2–4 weeks.

Frequently Asked Questions

What is an AI agent for startups?

An AI agent for startups is software that executes a defined multi-step business workflow autonomously — reading inputs, making decisions, using tools, and producing outputs — without a human managing each step. Unlike chatbots that respond to single prompts, agents run complete workflows like lead enrichment, support triage, or ops data processing. They work best on high-frequency tasks with structured inputs and clear success criteria.

How much does it cost to build an AI agent for a startup?

A production-ready AI agent typically costs between $3,000 and $15,000 to build, depending on complexity, integrations required, and how many edge cases need handling. Simple workflow agents using n8n or OpenAI Assistants API are on the lower end. Custom multi-agent systems with complex orchestration and proprietary integrations sit at the higher end. Ongoing infrastructure costs are usually $50–$300/month depending on API usage and hosting.

Why do so many AI agent projects fail?

The most common reason is vague task scope — deploying an agent against a goal ("automate support") rather than a specific, bounded workflow with a defined start event, step sequence, and success condition. The second most common reason is no human handoff design — agents that hit edge cases with no exit ramp either fail silently or make confident mistakes. These are planning failures, not technology failures.

How long does it take to deploy an AI agent?

A well-scoped, single-workflow AI agent can be live in production in 2–4 weeks with experienced engineers. The first week is scoping and edge case documentation. Weeks two and three are build and integration. Week four is testing with real data, observability setup, and handoff to the team. Complex multi-agent systems take 6–12 weeks. Skipping the scoping phase reliably extends the timeline by 2–4 weeks as problems emerge during build.

What is the best AI agent framework for startups in 2026?

For most startups, n8n is the best starting point — it handles standard workflow automation with 400+ integrations and minimal custom code. For more complex reasoning or custom orchestration, LangChain with Python gives the most control. OpenAI Assistants API is good for single-domain agents where you want OpenAI to manage context and tool routing. The right choice depends on your workflow complexity, your team's engineering capacity, and how much custom logic you need.

Can a startup use AI agents without a dedicated AI engineer?

Yes, for simpler use cases. n8n and similar no-code/low-code tools let a technical generalist build and maintain straightforward workflow agents without deep AI expertise. For custom agents involving complex reasoning, multi-step orchestration, or proprietary integrations, you'll need at least one engineer comfortable with Python and LLM APIs — or an external team with that expertise to build and hand over a documented system.