Somewhere in a warehouse in Manchester, a pricing manager used to spend every Monday morning doing something painfully manual: opening 6 competitor websites in 6 different tabs, copying prices into a spreadsheet, and hoping he hadn't missed a flash sale that went live over the weekend.

By the time his team reacted to a competitor dropping prices, they'd already lost two days of sales volume to someone offering the same product for £8 less.

That's the problem a mid-sized UK e-commerce brand came to us with. They sold across several categories (electronics accessories, home goods, personal care) with over 8,000 active SKUs, competing against both large marketplaces and niche direct-to-consumer brands. Their pricing team was reactive, slow, and exhausted.

We built them a custom real-time competitor price monitoring system. This post is a full account of what we built, how it works, the mistakes we made along the way, and what it actually delivered.

The Problem in Detail

Before writing a single line of code, we spent time understanding exactly where the pain was. Three things stood out:

1. Delayed reaction time. By the time the pricing team noticed a competitor had dropped prices and got approval to respond, 24–48 hours had passed. In categories with thin margins and high search visibility, that window is everything.

2. Incomplete coverage. They were only manually tracking 6 of their top competitors. The other 6 — including a fast-growing D2C brand eating into two of their highest-margin categories — weren't being monitored at all.

3. No historical data. They couldn't answer basic questions: Does Competitor X always drop prices on Fridays before the weekend? Do they run seasonal discounts in predictable patterns? Without history, every pricing decision was made blind.

The goal we agreed on: monitor all 12 competitors, across all 8,000+ SKUs, with price data refreshed every 4 hours, alerts firing within minutes of a significant change, and 6 months of history available for trend analysis.

The Architecture We Built

Here's how the system is structured, from data collection to the dashboard the pricing team uses every day.

Layer 1 — The Scraping Layer

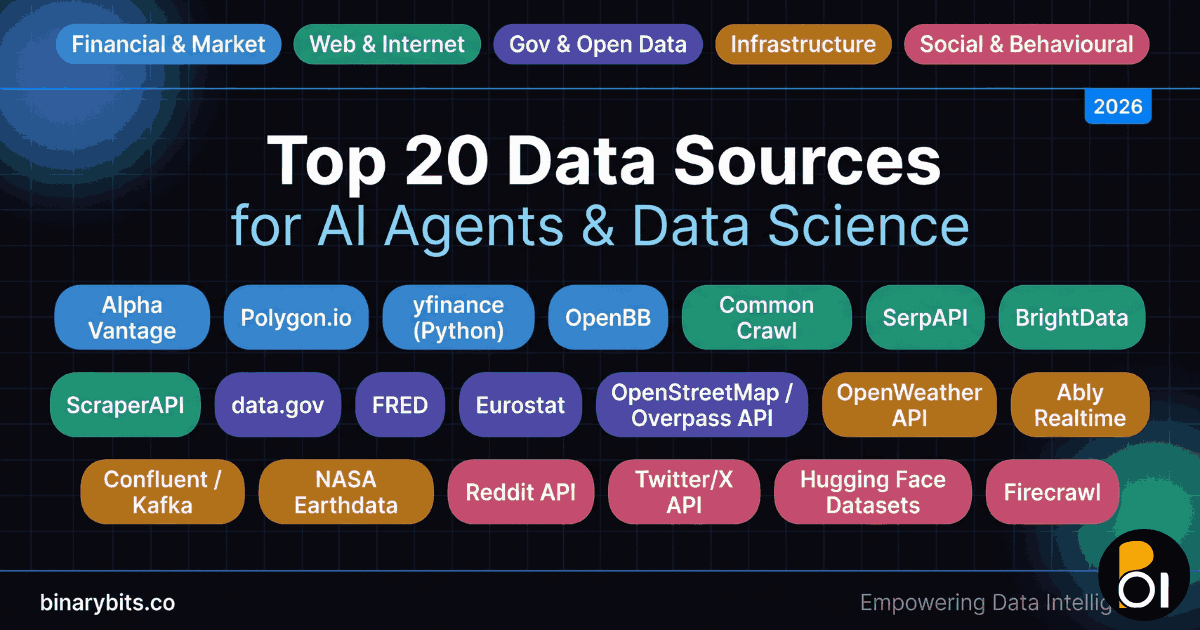

We built the scrapers in Python using a combination of Playwright for JavaScript-heavy sites and HTTPX with async for simpler HTML pages. Out of the 12 competitor sites:

- 7 were straightforward HTML — prices in the DOM, no JS rendering needed

- 4 were storefronts built on heavy JavaScript frameworks, which required headless browser rendering

- 1 was a marketplace with an unofficial API endpoint we could call directly (much faster and more reliable than scraping HTML)

Each scraper is a standalone Python module with a consistent interface: it takes a list of product URLs and returns a structured JSON array of price records. This made it easy to add new competitors later without touching the rest of the system.
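A minimal sketch of what such a shared interface might look like. The names here (`PriceRecord`, `Scraper`, `StaticDemoScraper`, `run`) and the record fields are illustrative assumptions, not the production code, and the demo scraper returns canned data instead of fetching a real site.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from typing import Optional, Protocol


@dataclass
class PriceRecord:
    competitor: str         # competitor slug
    url: str                # product page the record came from
    price: Optional[float]  # None when no price could be read
    currency: str
    in_stock: bool
    scraped_at: str         # ISO 8601 timestamp, UTC


class Scraper(Protocol):
    """The contract every competitor module is assumed to satisfy."""

    competitor: str

    def scrape(self, urls: list[str]) -> list[PriceRecord]: ...


class StaticDemoScraper:
    """Stand-in scraper that serves canned prices instead of hitting a site."""

    competitor = "demo-shop"

    def __init__(self, canned: dict[str, float]) -> None:
        self._canned = canned

    def scrape(self, urls: list[str]) -> list[PriceRecord]:
        return [
            PriceRecord(
                competitor=self.competitor,
                url=url,
                price=self._canned.get(url),
                currency="GBP",
                in_stock=url in self._canned,
                scraped_at="2024-01-01T00:00:00+00:00",
            )
            for url in urls
        ]


def run(scraper: Scraper, urls: list[str]) -> str:
    """Run any scraper and serialise its records to a JSON array."""
    return json.dumps([asdict(record) for record in scraper.scrape(urls)])
```

Because the rest of the pipeline only depends on `scrape()` and the record shape, a new competitor module can be dropped in without changes elsewhere.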

We ran scrapers inside Docker containers orchestrated by a simple job queue. Each competitor gets its own container, so a flaky site or a temporary block doesn't cascade and delay the others.

Layer 2 — Handling Blocks and Rate Limits

This is where we spent far more time than expected. Three of the 12 sites had aggressive anti-bot measures: enterprise-grade bot protection, fingerprinting, and rate limiting that triggered after ~50 requests per hour from the same IP.

Our approach:

- Rotating residential proxies for the three protected sites — we used a paid proxy pool. The cost (around $80/month) is negligible compared to what bad pricing decisions cost

- Human-like request timing — random delays between 2–8 seconds, varying user agents, randomised viewport sizes in the headless browser

- Request fingerprint variation — rotating browser profiles to avoid consistent fingerprinting

- Retry logic with exponential backoff — if a request fails, wait 30 seconds, then 60, then 120, then mark it as failed and move on
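The timing and retry rules above can be sketched roughly as follows. The function names are hypothetical, and the sketch reads the retry rule as one initial attempt plus a retry after each of the three waits. It catches every exception for brevity; a real scraper would likely catch only network and HTTP errors.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")

BACKOFF_SECONDS = (30, 60, 120)  # waits between attempts, as listed above


def human_delay(low: float = 2.0, high: float = 8.0) -> float:
    """Pick a random pause length in seconds within the 2-8 s range."""
    return random.uniform(low, high)


def fetch_with_backoff(
    fetch: Callable[[], T],
    waits: tuple = BACKOFF_SECONDS,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[T]:
    """Call fetch(); after each failure wait 30 s, 60 s, then 120 s.

    Returns None once the final attempt has failed, so the caller can
    mark the URL as failed and move on.
    """
    for wait in (*waits, None):
        try:
            return fetch()
        except Exception:
            if wait is None:  # no waits left: give up
                return None
            sleep(wait)
    return None
```

Passing `sleep` as a parameter keeps the function testable without actually waiting several minutes.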

After tuning this over two weeks, our successful scrape rate stabilised at 97.3% across all 12 sites.

Layer 3 — Data Storage

We used a two-tier storage approach:

PostgreSQL as the primary database — stores every price record with a timestamp, the product identifier, competitor slug, and raw scraped price. After 6 months this table holds roughly 28 million rows and still queries fast with the right indexes.

Redis as a cache layer — stores the latest price for every SKU-competitor combination. The dashboard reads from Redis, not Postgres, so page loads are near-instant regardless of database size.
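To make the two tiers concrete, here is a small runnable sketch of the write and read paths. It deliberately swaps in stand-ins so it runs anywhere: SQLite in place of PostgreSQL and a plain dict in place of Redis. The table, column, index, and key names are assumptions for illustration only.

```python
import sqlite3
from typing import Dict, Optional

# Stand-in for the PostgreSQL history table (hypothetical schema).
db = sqlite3.connect(":memory:")
db.execute(
    """
    CREATE TABLE price_history (
        sku         TEXT NOT NULL,
        competitor  TEXT NOT NULL,
        price_pence INTEGER NOT NULL,
        scraped_at  TEXT NOT NULL
    )
    """
)
# Composite index so "history for one SKU at one competitor" stays fast
# as the table grows.
db.execute(
    "CREATE INDEX idx_history_lookup "
    "ON price_history (sku, competitor, scraped_at)"
)

# Stand-in for the Redis cache: one key per SKU-competitor pair.
latest_cache: Dict[str, int] = {}


def record_price(sku: str, competitor: str, price_pence: int, scraped_at: str) -> None:
    """Append to the history table, then overwrite the cached latest price."""
    db.execute(
        "INSERT INTO price_history VALUES (?, ?, ?, ?)",
        (sku, competitor, price_pence, scraped_at),
    )
    db.commit()
    latest_cache[f"price:{sku}:{competitor}"] = price_pence


def latest_price(sku: str, competitor: str) -> Optional[int]:
    """Dashboard read path: consult the cache only, never the history table."""
    return latest_cache.get(f"price:{sku}:{competitor}")
```

The history table only ever grows, while the cache holds exactly one value per SKU-competitor pair, which is why dashboard reads stay fast regardless of how much history accumulates.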

SKU matching was the hardest part of the data problem. Competitor sites don't use your product IDs. We built a matching layer that maps competitor product listings to the client's internal SKU catalogue using a combination of EAN/barcode matching (where available), product title fuzzy matching, and a small manual review workflow for ambiguous matches. About 94% of SKUs were matched automatically. The remaining 6% went through a one-time manual review.
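The three-stage matching described above might be sketched like this. It uses the standard library's `difflib.SequenceMatcher` as a simple fuzzy matcher, which is an assumption rather than the matcher used in the project, and the two thresholds are hypothetical values that would need tuning against real labelled pairs.

```python
from difflib import SequenceMatcher
from typing import Optional, Tuple

# Hypothetical cut-offs for illustration only.
AUTO_MATCH = 0.90
NEEDS_REVIEW = 0.70


def normalise(title: str) -> str:
    """Lowercase and collapse whitespace so cosmetic differences don't count."""
    return " ".join(title.lower().split())


def match_listing(listing: dict, catalogue: list) -> Tuple[Optional[str], str]:
    """Return (sku, status); status is 'auto', 'review', or 'unmatched'."""
    # 1. An exact EAN/barcode match wins outright when one is available.
    ean = listing.get("ean")
    if ean:
        for item in catalogue:
            if item.get("ean") == ean:
                return item["sku"], "auto"

    # 2. Otherwise fall back to fuzzy similarity between product titles.
    best_sku, best_score = None, 0.0
    for item in catalogue:
        score = SequenceMatcher(
            None, normalise(listing["title"]), normalise(item["title"])
        ).ratio()
        if score > best_score:
            best_sku, best_score = item["sku"], score

    if best_score >= AUTO_MATCH:
        return best_sku, "auto"
    if best_score >= NEEDS_REVIEW:
        return best_sku, "review"  # 3. Ambiguous: queue for manual review.
    return None, "unmatched"
```

Returning a status alongside the SKU keeps the manual review queue explicit: anything marked `review` goes to a person instead of being silently accepted or dropped.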

Layer 4 — Alerts and Notifications

Alerts fire when:

- A competitor drops a price by more than a configurable threshold (default: 5%)

- A competitor undercuts the client's price on a high-priority SKU

- A product that was previously unavailable (out of stock) comes back in stock with a live price

- A new product appears on a competitor site that matches a category the client sells in

Notifications go out via a team messaging webhook and email. The pricing team configured their own thresholds per SKU category — they wanted more sensitive alerts on electronics (where margins are tight) and less noise on home goods (where they have pricing flexibility).
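As a rough illustration of how the first three rules and the per-category thresholds could fit together, here is a simplified sketch. The category names, override values, and alert labels are assumptions, and the "new product" rule is left out because it depends on catalogue discovery, not price comparison.

```python
from typing import Dict, List, Optional

DEFAULT_DROP_THRESHOLD = 0.05  # the 5% default mentioned above

# Hypothetical overrides: more sensitive on electronics, quieter on home goods.
CATEGORY_THRESHOLDS: Dict[str, float] = {"electronics": 0.02, "home-goods": 0.10}


def evaluate_alerts(
    category: str,
    previous_price: Optional[float],  # None means the listing was unavailable
    current_price: Optional[float],   # None means it is unavailable now
    our_price: float,
    high_priority: bool,
) -> List[str]:
    """Return the alert labels triggered by one fresh price observation."""
    alerts: List[str] = []
    if current_price is None:
        return alerts  # nothing to compare against

    if previous_price is None:
        alerts.append("back_in_stock")
    elif previous_price > 0:
        threshold = CATEGORY_THRESHOLDS.get(category, DEFAULT_DROP_THRESHOLD)
        drop = (previous_price - current_price) / previous_price
        if drop > threshold:
            alerts.append("price_drop")

    if high_priority and current_price < our_price:
        alerts.append("undercut")
    return alerts
```

For example, a 5% drop triggers `price_drop` in the electronics category under these assumed thresholds but stays silent in home goods.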

Layer 5 — The Dashboard

We built a lightweight internal dashboard with a Python API backend and a minimal JavaScript frontend. The key views:

- Price matrix — the client's price vs. all competitors, colour-coded (green = cheapest, red = most expensive)

- Price history charts — 30/60/90 day trend lines per SKU per competitor

- Alert feed — chronological list of all triggered alerts with one-click "acknowledge" and "update our price" actions

- Category summary — which categories are most price-competitive, where you're consistently losing on price

The Mistakes We Made (And What We'd Do Differently)

This section is the most useful part of any case study, so we're not skipping it.

Mistake 1: We underestimated SKU matching complexity.

We thought product matching would take a day. It took two weeks. Product titles across competitor sites are inconsistent, misspelled, truncated, or use different brand name formats. We should have scoped this as its own workstream from day one.

Mistake 2: We built the dashboard too early.

We started building the frontend before the data pipeline was stable. When the data model changed (which it did, twice), we had to refactor UI components we'd already built. In future projects, we validate the pipeline end-to-end before touching the frontend.

Mistake 3: We didn't account for site structure changes.

Two competitor sites updated their page structure during the project. Scrapers broke silently — they returned empty results instead of throwing errors, which meant we had a two-day gap in data before anyone noticed. We now build scrapers with mandatory validation: if the expected fields aren't present in the output, the job fails loudly.
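A minimal sketch of that kind of "fail loudly" check. The required field names, the `min_fill_rate` parameter, and the exception class are hypothetical; the point is only that an empty or half-empty batch raises instead of passing through.

```python
class ScrapeValidationError(RuntimeError):
    """Raised when a scraper's output does not look like real price data."""


REQUIRED_FIELDS = ("url", "price", "currency")  # hypothetical field names


def validate_batch(records: list, expected_count: int, min_fill_rate: float = 0.8) -> list:
    """Return records unchanged, or raise if the batch looks broken."""
    if not records:
        raise ScrapeValidationError("scraper returned no records")

    for index, record in enumerate(records):
        missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
        if missing:
            raise ScrapeValidationError(f"record {index} is missing {missing}")

    # A sharp fall in volume usually means the page layout has changed.
    if len(records) < expected_count * min_fill_rate:
        raise ScrapeValidationError(
            f"only {len(records)} of {expected_count} expected records returned"
        )
    return records
```

Run as the last step of every scrape job, a check like this turns a silent two-day data gap into a failed job that shows up in monitoring right away.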

Mistake 4: We started with too-frequent scraping.

Our initial spec called for hourly scraping. This triggered aggressive blocking on 4 sites within the first week. We backed off to every 4 hours and it solved most of the blocking issues. For most pricing use cases, 4-hour intervals are more than sufficient — prices rarely change multiple times per day outside flash sales.

What It Actually Delivered

We're being specific here because vague results are useless.

After 90 days of running the system in production:

- Pricing reaction time dropped from 24–48 hours to under 2 hours for high-priority SKUs

- Coverage went from 6 competitors monitored to 12, with 8,000+ SKUs tracked

- The team identified a pattern where one competitor consistently dropped prices on Thursday evenings — they now pre-emptively adjust high-visibility SKUs on Thursday afternoons

- One category (phone accessories) saw a 17% improvement in conversion rate after the team used price data to reposition 3 key SKUs they'd been overpricing against the market

- The pricing manager estimated saving 12+ hours per week of manual monitoring work across the team
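Patterns like the Thursday evening drops are the kind of thing stored price history makes easy to check. As a simplified sketch (the function name and input shape are assumptions, not the analysis the team actually ran), counting price drops by day of the week for one SKU at one competitor could look like this:

```python
from collections import Counter
from datetime import datetime

WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday")


def drops_by_weekday(history: list) -> Counter:
    """Count price drops per weekday.

    history is a list of (iso_timestamp, price) pairs sorted oldest first.
    A drop is attributed to the day on which the lower price was observed.
    """
    counts: Counter = Counter()
    for (_, previous_price), (timestamp, price) in zip(history, history[1:]):
        if price < previous_price:
            day = WEEKDAYS[datetime.fromisoformat(timestamp).weekday()]
            counts[day] += 1
    return counts
```

A weekday that dominates the counts across several weeks is a candidate for a pre-emptive price adjustment.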

The system has been running for over 8 months now with minimal maintenance. The main ongoing cost is the proxy service for the 3 protected sites.

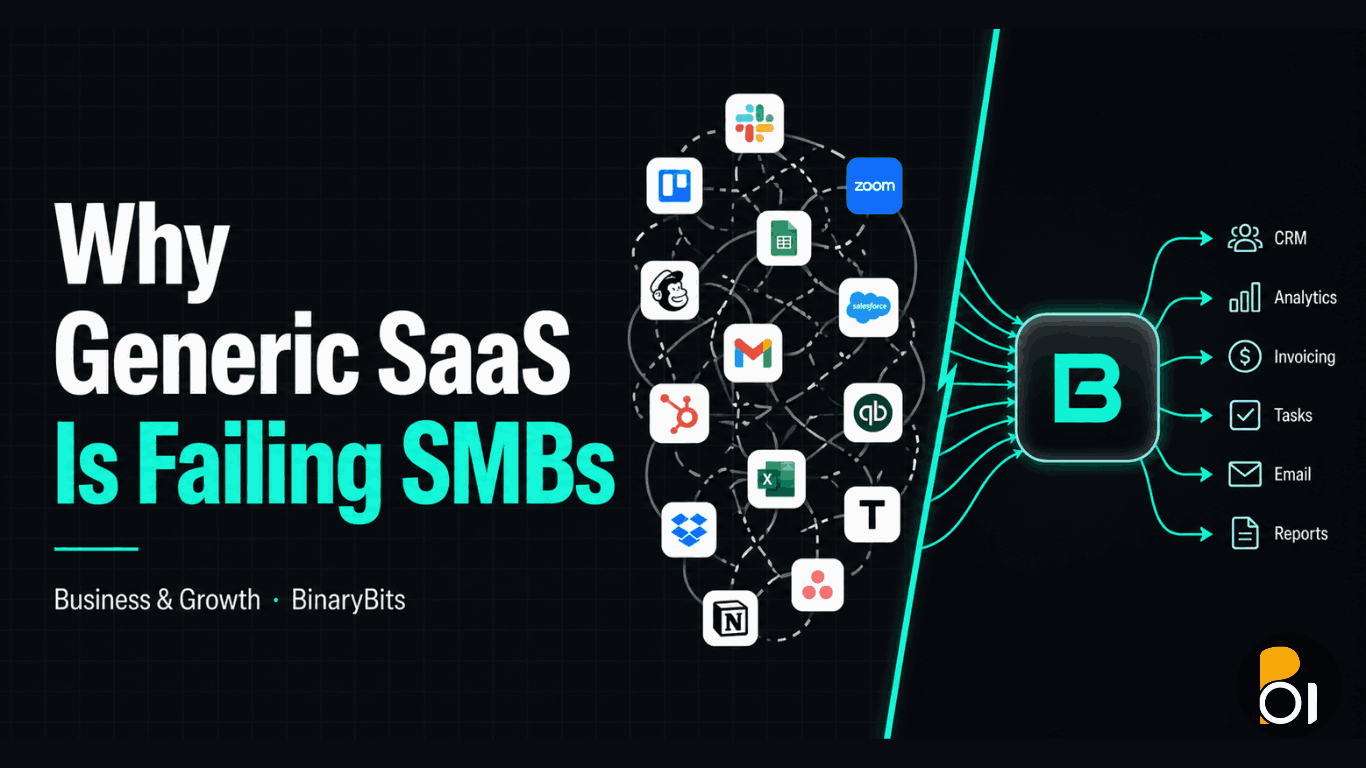

Should You Build This or Buy a SaaS Tool?

This is the honest answer: it depends on your scale and your specific situation.

Buy a SaaS tool if:

- You have fewer than 500 SKUs

- Your competitors are all on major marketplaces and retail platforms that SaaS tools already support

- You don't need custom SKU matching logic or proprietary site coverage

- You want to be up and running in days, not weeks

Build custom if:

- You have 1,000+ SKUs across multiple categories

- Your competitors run their own D2C sites not covered by SaaS tools

- You need the data to feed into your own internal systems (ERP, pricing engine, BI dashboard)

- You need custom alert logic, SKU matching rules, or pricing decision workflows

- You expect to run the system long enough that a custom build at this scale costs less than ongoing SaaS subscriptions

The client in this case had 8,000+ SKUs, 4 D2C competitors not covered by any SaaS tool, and needed the data to feed into their internal ERP. Custom was the right call.

The Takeaway

Competitor price monitoring isn't a nice-to-have for e-commerce brands competing at scale — it's table stakes. With over 78% of consumers comparison shopping for the best deal before buying, you're losing sales every day you don't know what your competitors are charging.

The manual approach stops working somewhere around 200 SKUs and 3 competitors. Beyond that, you either invest in a system or you accept that your pricing strategy will always be a step behind.

If you're at that inflection point — too large for spreadsheets, not sure whether to build or buy — we're happy to talk through your specific situation. No pitch, just a conversation.